GCP Shared VPC Subnets And Host Project Design

Originally ADR-0031_GCP_Shared_VPC_Subnets_and_Host_Project_Design (v6)

Status: Accepted

Deciders: ryan.cullison@droneup.com, sybil.melton@droneup.com, eric.brookman@droneup.com

Date: Apr 14, 2023

Technical Story

Document decisions for the host projects and subnet design in Shared VPC

Context and Problem Statement

[Shared VPC](confluence-title://PE/ADR17: GCP Network Architecture) design must be decided.

Every league will have its own service project and its own GKE clusters.

- The primary range for nodes and the secondary ranges for Pods and Services must not overlap

- Ranges for nodes can be expanded or added onto

- Ranges for pods can be expanded with additional node pools

- Ranges for services cannot be expanded.
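The non-overlap constraint above can be checked before a subnet is created. The following is a minimal sketch using Python's standard `ipaddress` module. The helper name `find_overlaps` and the `cluster_ranges` dictionary are hypothetical, and the CIDRs are the ones from this ADR's per-project example.

```python
from ipaddress import ip_network
from itertools import combinations


def find_overlaps(ranges):
    """Return (name_a, name_b) pairs whose CIDR blocks overlap.

    `ranges` maps a label (hypothetical naming) to a CIDR string.
    """
    nets = {name: ip_network(cidr) for name, cidr in ranges.items()}
    return [
        (a, b)
        for (a, net_a), (b, net_b) in combinations(nets.items(), 2)
        if net_a.overlaps(net_b)
    ]


# Ranges taken from the per-project example in this ADR.
cluster_ranges = {
    "nodes": "172.20.0.0/21",
    "services": "172.21.0.0/21",
    "pods": "100.64.0.0/17",
}

overlaps = find_overlaps(cluster_ranges)
print(overlaps or "no overlapping ranges")
```

Because Services ranges cannot be expanded later, a check like this is most useful at design time, when the Services range can still be sized generously.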

GCP Quota for subnetwork ranges per VPC: 300

Cloud Interconnect for Cato can only be assigned to one VPC

- Using a dedicated VPC for the interconnect allows us to use tags in the global network firewall policy instead of IP-range-based rules in separate VPC rules or hierarchical Org rules.

Decision Drivers

- Ensuring GCP quotas are not reached

- Scale

- Complexity

Considered Options

Host Project

- Single

- Multiple

Subnets per environment

- Large, shared subnets per environment

- Per project subnets

Decision Outcome

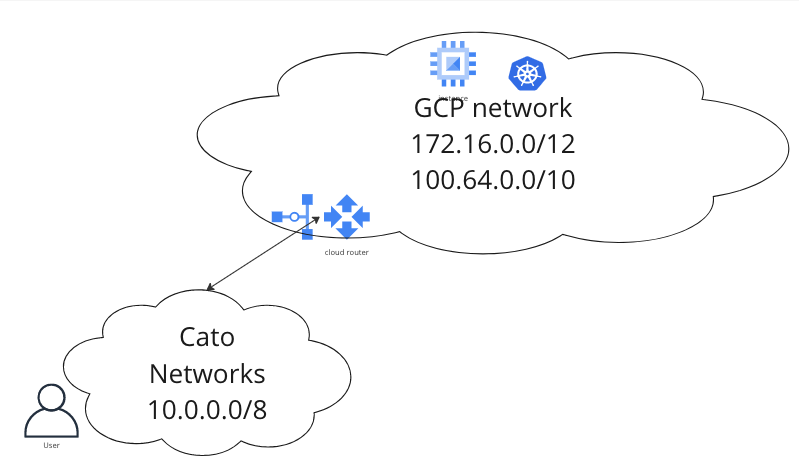

Leave 10.0.0.0/8 for enterprise sites (on the Cato network). Use 172.16.0.0/12 for primary subnets and secondary GKE Services ranges. Use 100.64.0.0/10 (RFC 6598) for Pod ranges to ensure large non-overlapping subnets are available.
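As an illustration of the address plan above, the sketch below classifies a candidate CIDR by the supernet it falls into. The `ADDRESS_PLAN` labels and the `classify` helper are hypothetical names chosen for this example; only the three supernets come from this ADR.

```python
from ipaddress import ip_network

# Supernets from this ADR; the labels are illustrative.
ADDRESS_PLAN = {
    "enterprise sites (Cato)": ip_network("10.0.0.0/8"),
    "primary and services": ip_network("172.16.0.0/12"),
    "pods": ip_network("100.64.0.0/10"),
}


def classify(cidr):
    """Return the plan label whose supernet contains `cidr`, or None."""
    net = ip_network(cidr)
    for label, block in ADDRESS_PLAN.items():
        if net.subnet_of(block):
            return label
    return None


print(classify("172.20.0.0/21"))   # falls in 172.16.0.0/12
print(classify("100.64.0.0/17"))   # falls in 100.64.0.0/10
print(classify("192.168.0.0/24"))  # outside the plan
```

A proposed subnet that classifies as `None`, or as the enterprise block, would fall outside the ranges this ADR allocates to GCP.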

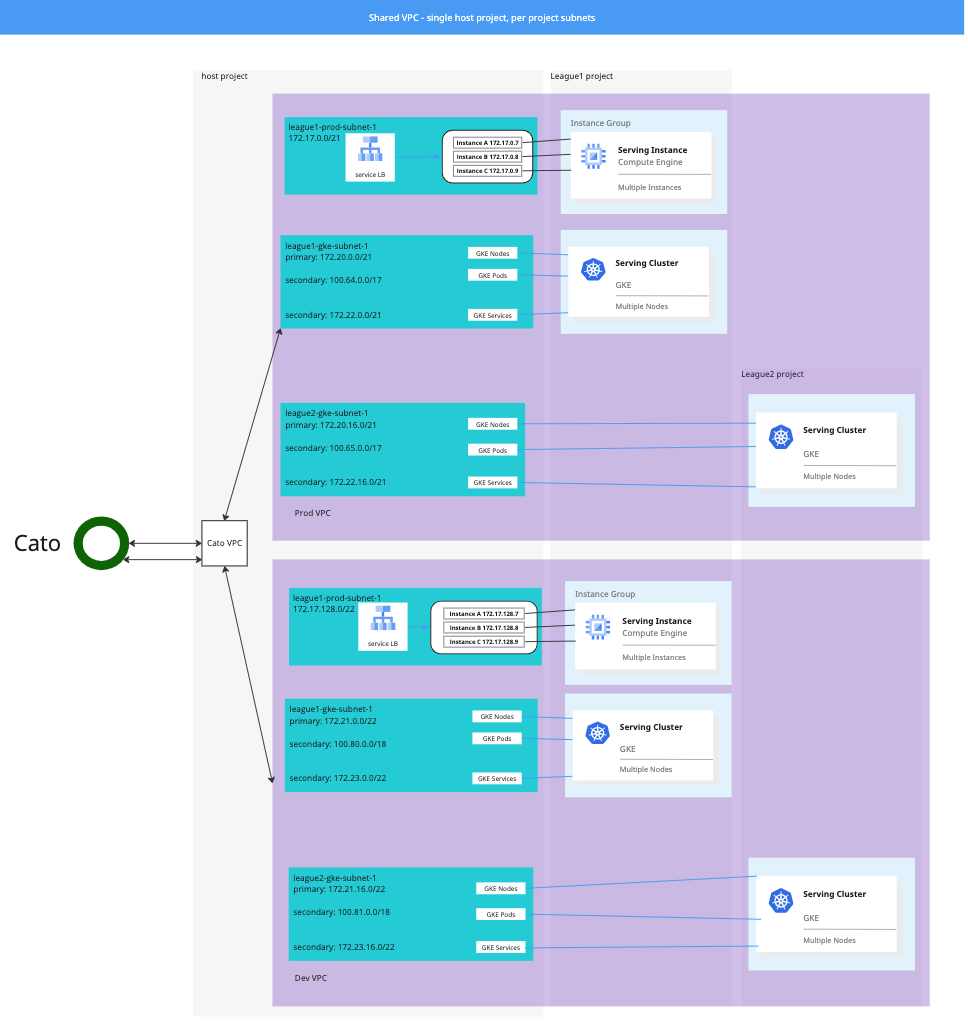

Single Host Project with per project subnets per environment

- Good, only one set of Cato interconnects is required

- Good, VPC Network Peering does not support transitive routing; that is, imported routes from other networks are not automatically advertised by Cloud Routers in your VPC network. Custom IP range advertisements from Cloud Routers in your VPC network are required to share Cato routes to destinations in the peer network.

- Good, smaller ingress/egress infrastructure required

- Good, minimizes number of boundaries to manage

- Bad, Prod and Dev share the Cato interconnect, so one environment could impact the other

Per project subnets per environment

Subnet ranges are assigned per project, in Shared VPCs for the prod and dev environments.

Per VPC: 10 leagues * 4 subnet ranges = 40 subnet ranges per region; 40 * 3 regions (reserving central and west for expansion) = 120 subnet ranges

- Good, Google’s recommended configuration for GKE
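The subnet range count for this option can be reproduced, and compared against the 300-range quota cited earlier, with a few lines of arithmetic. This is a sketch only: the function names are hypothetical, and the inputs (10 leagues, 4 ranges per league, 3 regions, quota of 300) are the figures stated in this ADR.

```python
SUBNET_RANGE_QUOTA = 300  # subnetwork ranges per VPC, as stated in this ADR


def ranges_needed(leagues, ranges_per_league, regions):
    """Total subnet ranges consumed in one VPC."""
    return leagues * ranges_per_league * regions


def max_leagues(ranges_per_league, regions, quota=SUBNET_RANGE_QUOTA):
    """Largest number of leagues that fits under the quota."""
    return quota // (ranges_per_league * regions)


used = ranges_needed(leagues=10, ranges_per_league=4, regions=3)
print(f"ranges used: {used} of {SUBNET_RANGE_QUOTA}")
print(f"leagues that fit in one VPC: {max_leagues(4, 3)}")
```

Under these assumptions, the quota leaves room for growth beyond the initial 10 leagues before an additional Shared VPC would be needed.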

Example

| GKE subnet | Purpose | Capacity |
|---|---|---|
| 172.20.0.0/21 | primary, nodes | 2,044 nodes |
| 172.21.0.0/21 | secondary, services | 2,048 services |
| 100.64.0.0/17 | secondary, pods | 32,768 addresses |
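The capacities in the example can be derived from the prefix lengths. The sketch below assumes that GCP reserves four addresses in a subnet's primary IPv4 range (network, default gateway, second-to-last, and broadcast) and none in secondary ranges, which matches the 2,044 versus 2,048 figures in the table. The helper name `usable_addresses` is hypothetical.

```python
from ipaddress import ip_network

# Assumption: four addresses are reserved in a primary IPv4 range
# (network, default gateway, second-to-last, broadcast).
GCP_RESERVED_PRIMARY = 4


def usable_addresses(cidr, primary):
    """Addresses available in `cidr`; primary ranges lose the reserved four."""
    total = ip_network(cidr).num_addresses
    return total - GCP_RESERVED_PRIMARY if primary else total


for cidr, purpose, primary in [
    ("172.20.0.0/21", "primary, nodes", True),
    ("172.21.0.0/21", "secondary, services", False),
    ("100.64.0.0/17", "secondary, pods", False),
]:
    print(f"{cidr:<16} {purpose:<22} {usable_addresses(cidr, primary):,}")
```

The same function applied to the /19 and /14 ranges of the large shared subnet option yields the capacities listed for that option.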

Consequences/Tech Debt Incurred

The number of future projects could cause a VPC to reach the 300 subnet range limit. If so, additional Shared VPCs will need to be created and peered with the Cato VPC.

Pros and Cons of the Options

Multiple Host Projects

- Good, host project separation for Prod and Dev ensures changes can be staged in Dev prior to applying in Prod

- Good, Dev bandwidth will not impact Prod

- Bad, additional firewall operational cost

- Bad, Cato interconnect per host project is additional financial and operational cost

- Bad, separate ingress/egress infrastructure is additional financial and operational cost

Large, shared subnets per environment

Shared VPCs for the prod and dev environments.

Per VPC region: 4 subnet ranges * 3 regions (reserve central & west for expansion) = 12

- Good, smaller number of subnets

- Good, no need to create new subnets for new projects

- Bad, not Google’s recommended configuration for GKE

  - One cluster could use up IP space and impact another cluster

Example

| GKE subnet | Purpose | Capacity |
|---|---|---|
| 172.20.0.0/19 | primary, nodes | 8,188 nodes |
| 172.21.0.0/19 | secondary, services | 8,192 services |
| 100.64.0.0/14 | secondary, pods | 262,144 addresses |