Secure GCP Architecture

Originally

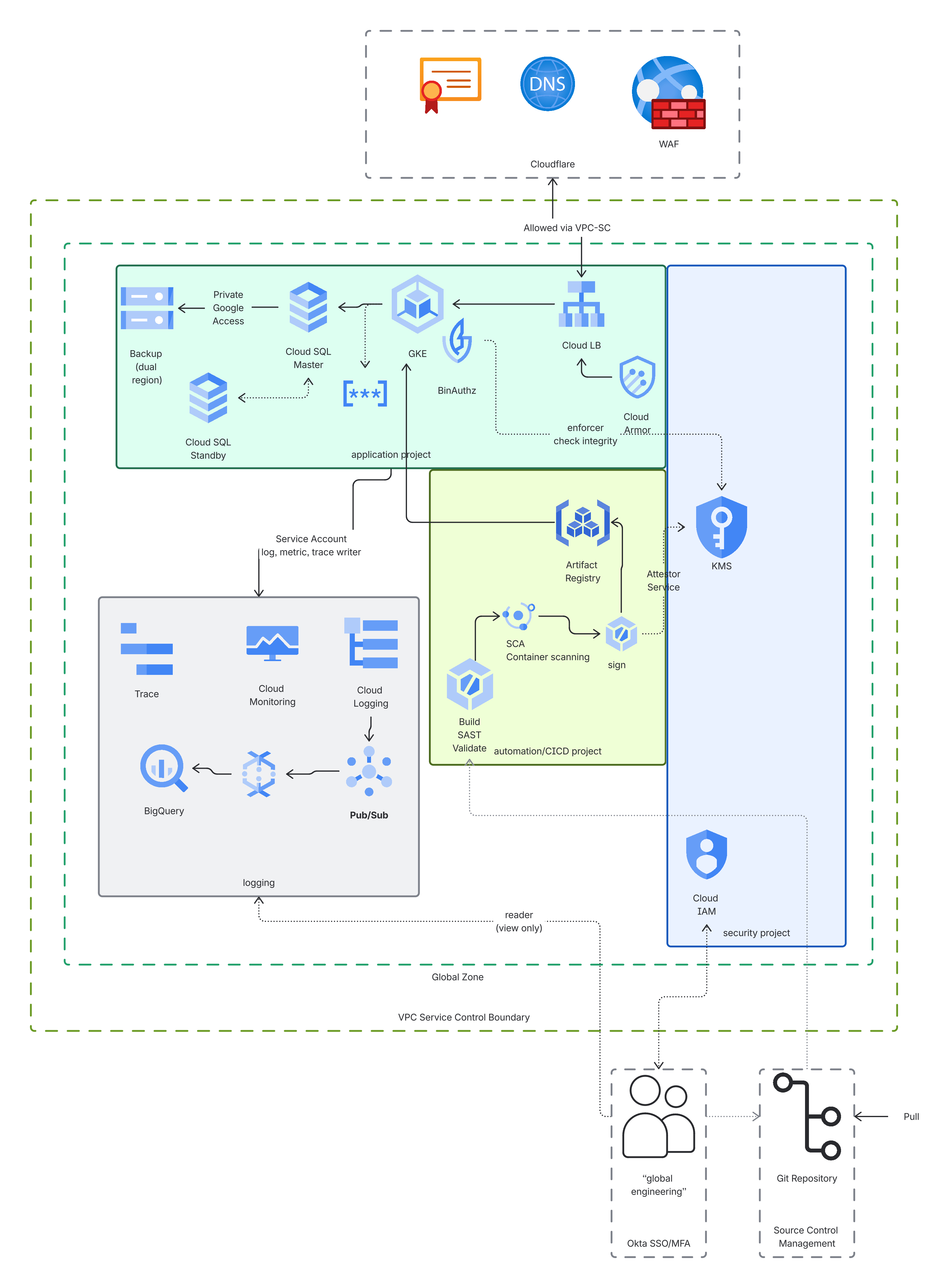

ADR-0131-Secure GCP Architecture (v18) · Source on Confluence

NIST 800-53 High Aligned GCP Architecture

| Traceability Links | |
|---|---|
| Jama Requirements | UERQ-SYS-1712 (FedRAMP Authorization), UERQ-SYS-1713 (SOC 2 Type II Compliance), UERQ-SYS-1726 (FedRAMP Boundary Ingestion), FAS security requirements (UERQ-SYS-1509 through UERQ-SYS-1512) |
| Jira Tasks | CORE-2566 |

Context

As our platform expands to support highly regulated industries such as civil aviation, there is a need for environment parity between our standard development areas and compliance-restricted zones. Currently, the lack of a standardized, “hardened” sandbox environment creates “deployment friction,” where the infrastructure and code in a general-purpose environment will encounter security policy violations if promoted to restricted production tiers.

Specifically, we need to address:

- Consistency Gap: Ensuring that the architectural topology (networking, compute, and identity gates) used by the global engineering team is a functional mirror of our most restricted environments.

- Security-by-Design: Moving away from “retrofitting” security at the end of the lifecycle and instead providing developers with a sandbox that enforces high-integrity defaults from the first line of code.

- Auditability: Establishing a clear, automated “Chain of Custody” for artifacts that can be traced back to verified requirements and security scans.

This decision is being made in the context of implementing a multi-zone architecture. We are moving toward a model where infrastructure is partitioned into discrete trust tiers.

This ADR focuses on the creation of a Secure Sandbox, which will satisfy strict security frameworks (such as NIST 800-53 High). The sandbox is designed to allow global collaboration and rapid iteration while ensuring that all outputs are compatible with a Restricted Production Environment used for high-impact data processing.

Decision

We are adopting a Security-First Cloud Architecture based on the GCP FedRAMP Quickstart blueprints. This establishes a “known-good” baseline for environments, ensuring that the Secure Sandbox mimics a compliance-restricted zone.

The specific architectural decisions are:

Google Cloud Platform (GCP)

We utilize GCP for its recognized security authorizations, such as P-ATO/FedRAMP High. Google’s authorized in-scope services ensure our secure products align with commercial capabilities. Google offers default encryption for data in transit and at rest, along with Customer Managed Encryption Keys (CMEK). Our organizational policies enforce continuous compliance with automatic guardrails that prevent unauthorized configuration errors.

Authorized Resource Catalog & Exception Process

We are adopting a “Verified-First” resource strategy. To maintain strict boundary separation, all personnel working within the Secure Sandbox are restricted to using the vetted GCP services.

The team is encouraged to use managed services that replace self-hosted infrastructure.

If an application requires a resource not currently in the “Approved” list (e.g., InfluxDB), the following process applies:

- Information Security will assess the service’s compliance status and its ability to integrate with our central logging and encryption (KMS) standards.

- If the service is not available as a managed GCP offering, it must be deployed as a hardened, scanned container within our GKE Autopilot environment.

- If a managed service or containerized approach is determined to be technically unfeasible, the team will evaluate a Marketplace-authorized third-party SaaS, provided it meets the required data boundary requirements.
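The exception process above can be read as an ordered decision. The following is a minimal Python sketch of that ordering only, not an implemented tool. The names `ResourceRequest`, `Path`, and `resolve` are hypothetical, and the explicit `REJECTED` outcome is an assumption the ADR does not spell out.

```python
from dataclasses import dataclass
from enum import Enum


class Path(Enum):
    MANAGED_SERVICE = "managed GCP service"
    HARDENED_CONTAINER = "hardened container on GKE Autopilot"
    MARKETPLACE_SAAS = "Marketplace-authorized third-party SaaS"
    REJECTED = "rejected"  # assumption: the ADR does not name this outcome


@dataclass(frozen=True)
class ResourceRequest:
    name: str
    passes_infosec_review: bool      # compliance, logging, and KMS integration assessed
    has_managed_gcp_offering: bool
    container_feasible: bool         # can run as a hardened, scanned container
    saas_meets_data_boundary: bool   # Marketplace SaaS satisfies the data boundary


def resolve(request: ResourceRequest) -> Path:
    """Walk the exception process in the order the ADR lists it."""
    if not request.passes_infosec_review:
        return Path.REJECTED
    if request.has_managed_gcp_offering:
        return Path.MANAGED_SERVICE
    if request.container_feasible:
        return Path.HARDENED_CONTAINER
    if request.saas_meets_data_boundary:
        return Path.MARKETPLACE_SAAS
    return Path.REJECTED
```

For a request with illustrative inputs such as `ResourceRequest("influxdb", True, False, True, False)`, the sketch selects the hardened-container path.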

Project Model

We are establishing a Partitioned Project Model for the Secure Sandbox. This structure ensures that every functional component (Logs, Keys, Build, App) is isolated by a hard IAM and networking boundary to meet Separation of Duties controls.

| Technical Decision | Functional Rationale | NIST Control |
|---|---|---|
| Sandbox Folder with strict Organization Policies | Serves as the high-integrity “container” for all workloads, enforcing residency. | SC-7 (Boundary Protection) |
| Separate logging/operations Project | Ensure evidence integrity during an incident. | AU-9 (Audit Integrity) |
| Separate KMS/security Project | Ensure that those who manage the application data cannot also manage the encryption keys. | AC-5 / SC-28 |
| Dedicated DevOps/automation Project | Centralizing all deployment logic. 1. Environment configurations are version-controlled, eliminating manual “configuration drift.” 2. Build runners are logically decoupled from the live application. If a runner is compromised, the attacker has no direct path into the production database. 3. Security scanners (SAST/SCA/DAST) are “shifted left” and integrated directly into the build pipeline, ensuring no artifact is promoted without passing. 4. Every deployment creates a permanent record of who authorized the change and what code was executed, simplifying the recertification process. | 1. CM-2 2. AC-6 3. SI-2 4. AU-12 |
| Generic Resource Naming | Obfuscates the specific purpose of resources (e.g., app-01-db vs tax-record-db) to limit reconnaissance potential. | SC-38 (Information Hiding) |
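The Separation of Duties intent behind the partitioned projects can be checked mechanically. The sketch below is one hedged way to do so in Python. The duty labels and the `find_sod_violations` helper are hypothetical and do not correspond to real IAM role names. The conflicting pairs are taken from the rationale in the table (data versus keys, data versus logs).

```python
from itertools import combinations
from typing import Dict, List, Set, Tuple

# Hypothetical duty labels; the ADR describes the duties but not these identifiers.
CONFLICTING_DUTIES = {
    frozenset({"app-data-admin", "kms-admin"}),
    frozenset({"app-data-admin", "log-admin"}),
}


def find_sod_violations(
    grants: Dict[str, Set[str]]
) -> List[Tuple[str, Tuple[str, str]]]:
    """Return (principal, conflicting duty pair) for every violation found."""
    violations = []
    for principal, duties in sorted(grants.items()):
        # Check every pair of duties held by the same principal.
        for pair in combinations(sorted(duties), 2):
            if frozenset(pair) in CONFLICTING_DUTIES:
                violations.append((principal, pair))
    return violations
```

A check like this could run against exported IAM bindings once they are mapped to duty labels; that mapping is outside the scope of the sketch.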

GKE Autopilot

We are shifting to GKE Autopilot for container orchestration. This change transfers the operational burden of cluster management and security patching to the provider. Autopilot is secure by default, as it blocks SSH and restricts node access.

Implementation of High-Integrity Deployment Gates

To ensure software and information integrity, we are implementing three core technical controls.

Binary Authorization requires that only container images signed by our trusted CI/CD pipeline can be deployed.

Kernel-Level Isolation (GKE Sandbox) employs gVisor-based isolation to enhance security for containerized workloads.

Workload Identity removes long-lived static credentials by mapping Kubernetes service accounts directly to IAM roles, strictly enforcing the principle of least privilege.
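To make the signed-image gate concrete, here is a small Python simulation of the admit-or-reject decision. It is a sketch under loud assumptions: Binary Authorization verifies attestations made with asymmetric keys, whereas this example substitutes HMAC-SHA256 from the standard library purely to stay self-contained. The function names `sign_digest` and `admit` are hypothetical and are not part of any GCP API.

```python
import hashlib
import hmac
from typing import Optional


def sign_digest(image_digest: str, key: bytes) -> str:
    """Stand-in for the CI/CD pipeline attesting an image digest (HMAC, not PKI)."""
    return hmac.new(key, image_digest.encode(), hashlib.sha256).hexdigest()


def admit(image_digest: str, attestation: Optional[str], trusted_key: bytes) -> bool:
    """Admit an image only if it carries an attestation made with the trusted key."""
    if attestation is None:
        return False  # unsigned images are never deployed
    expected = sign_digest(image_digest, trusted_key)
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, attestation)
```

The point of the model is the default: an image without a valid attestation from the pipeline's key is rejected, regardless of who requests the deployment.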

Automated Baseline Deployment

To maintain a consistent and high-integrity deployment lifecycle, we are standardizing on Google Infrastructure Manager (Infra Manager) for Infrastructure-as-Code (IaC) and Google Cloud Build for our CI/CD pipelines.

Key Drivers for this Decision:

- Terraform Cloud currently lacks the specific FedRAMP authorizations (ATO) required for compliance-restricted zones. Infra Manager is already vetted and governed by GCP’s security guardrails.

- Infra Manager allows us to continue using Terraform as our declarative language while benefiting from Google’s managed execution environment, providing a seamless transition for the team while satisfying strict Configuration Management (CM) controls.

- Public marketplaces (such as the GitHub Actions Marketplace) consist primarily of unvetted, third-party code. Executing these scripts within our pipeline introduces unacceptable risks to software integrity (NIST SI-7).

- Utilizing managed services removes the significant burden of provisioning, patching, and securing “Self-Hosted Runners.”
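One way a pipeline could reject unvetted third-party artifacts is to check every image reference before use. The Python sketch below assumes a hypothetical Artifact Registry prefix (`ALLOWED_PREFIX`) and adds a digest-pinning requirement that the ADR does not state. Both are illustrative assumptions.

```python
import re

# Hypothetical registry path; the real location, project, and repository will differ.
ALLOWED_PREFIX = "us-docker.pkg.dev/devops-project/vetted/"

# Assumption: images must be pinned by digest rather than by a mutable tag.
_DIGEST_SUFFIX = re.compile(r"@sha256:[0-9a-f]{64}$")


def is_vetted_image(ref: str, allowed_prefix: str = ALLOWED_PREFIX) -> bool:
    """True if the image comes from the vetted registry and is pinned by digest."""
    return ref.startswith(allowed_prefix) and bool(_DIGEST_SUFFIX.search(ref))
```

Under these assumptions, `docker.io/library/nginx:latest` fails both conditions, and an image from the vetted registry referenced by tag fails the pinning condition.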

Service Accounts

We are standardizing on a Project-Local Identity Model. All Service Accounts (SAs) will be created and managed within the specific project where their associated resources reside.

Key Drivers for this Decision:

- By creating SAs in the local project, we eliminate cross-project “trust chains.” If a service account is compromised, its access is confined to its home project, preventing lateral movement across the secure boundary.

- Service Accounts are treated as a single unit with the resources they support. When a resource (e.g., a GKE cluster or a VM) is decommissioned via Terraform, its dedicated Service Account is deleted simultaneously. This prevents “Identity Bloat” and the risk of orphaned accounts (NIST AC-2).

- We are strictly prohibiting the creation and storage of service account .json keys. Instead, we will utilize Workload Identity (for GKE) and Service Account Impersonation. This eliminates the risk of credential leakage in source code or local storage (NIST SC-12).

- Every distinct application component will have its own dedicated Service Account. We will not “recycle” identities across different services, ensuring an immutable audit trail for every action (NIST AU-12).

| Service Account Type | Location | Why? |
|---|---|---|
| GKE / App Identity | Workload Project | Blast Radius: Keeps app identities local to the app data. |
| KMS Admin | KMS Project | AC-5: Prevents app devs from managing their own encryption keys. |
| Log Processor | Logging Project | AU-9: Ensures the process that handles logs is outside the app boundary. |
| Terraform Deployer | DevOps Project | CM-3: Centralizes the “Authoritative Source” of changes. |
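The Project-Local Identity Model lends itself to a simple policy check, because a user-managed service account's email embeds its home project (`NAME@PROJECT_ID.iam.gserviceaccount.com`). The Python sketch below uses hypothetical helper names and example project IDs. It deliberately ignores domain-scoped project IDs, which use a different email form.

```python
import re

# Matches NAME@PROJECT_ID.iam.gserviceaccount.com for plain (non domain-scoped) projects.
_SA_EMAIL = re.compile(
    r"^[a-z][a-z0-9-]*@([a-z][a-z0-9-]*)\.iam\.gserviceaccount\.com$"
)


def sa_home_project(email: str) -> str:
    """Extract the project that owns a user-managed service account."""
    match = _SA_EMAIL.match(email)
    if match is None:
        raise ValueError(f"not a user-managed service account email: {email}")
    return match.group(1)


def is_project_local(email: str, resource_project: str) -> bool:
    """True if the service account lives in the same project as the resource."""
    return sa_home_project(email) == resource_project
```

A check of this kind could be applied to planned IAM bindings to flag any service account attached to a resource outside its home project.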

Networking

We are implementing a “Dark VPC” architecture. All Virtual Private Clouds (VPCs) in the Secure Sandbox will have zero direct public ingress or egress. All communication into and out of the environment must pass through authorized, identity-aware gateways.

Key Drivers for this Decision:

- Our workloads will communicate with Google APIs (like Cloud Storage or Secret Manager) using Private Google Access. This ensures that traffic never leaves the Google backbone and never touches the public internet (NIST SC-7).

- Applications that require external updates (e.g., pulling a standard OS patch) will do so via Cloud NAT with specific IP allowlisting. No resource will have a public IP address assigned to its interface.

- We are wrapping the entire project structure with VPC Service Controls (VPC-SC). This acts as a “Data Firewall” that prevents data exfiltration, even by authorized users. For example, a user cannot copy data from a Secure Sandbox bucket to a personal or “Global” bucket, even if they have the IAM permissions to do so (NIST AC-4).

- All service-to-service communication between projects (e.g., Automation to Workload) will occur over Private Service Connect (PSC) and Internal Load Balancers, ensuring cross-project traffic remains entirely on the private network. Because of this, Shared VPC will not be needed.

- We will utilize Private DNS Zones only. No internal resource names or IP addresses will be resolvable via public DNS, preventing reconnaissance and information leakage (NIST SC-8).
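The “Data Firewall” behavior of VPC-SC described above (a copy is blocked even when IAM allows it) can be modeled in a few lines. This Python sketch is a deliberate simplification: real perimeters also support ingress and egress rules, and the function and project names here are hypothetical.

```python
from typing import FrozenSet


def copy_allowed(
    source_project: str,
    dest_project: str,
    perimeter: FrozenSet[str],
    has_iam_permission: bool,
) -> bool:
    """A copy succeeds only if IAM allows it AND both ends sit inside the perimeter."""
    both_inside = source_project in perimeter and dest_project in perimeter
    return has_iam_permission and both_inside
```

The key property is that the perimeter check and the IAM check are independent conditions, so holding the IAM permission alone is never sufficient.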

Tiered Connectivity & Trust-Based Data Flow

We are establishing a Tiered Connectivity Model to govern all data movement across our environment boundaries. This model categorizes every connection based on the “Trust Level” of the external entity and the direction of the initiation (Push vs. Pull).

By formalizing these tiers, we ensure the “Least Privilege Networking” principles required for our higher-impact zones.

Tier 1: Inbound Push Providers (External-to-Internal)

Trust Level: Low

External entities pushing data to the platform.

Security Controls (NIST IA-2, IA-3, SC-8)

- VPC-SC ingress policy must explicitly allow the external identity (User or Service Account) to “cross” the perimeter to reach specific protected resources.

- Operators authenticate via Operator Authentication Service.

- Connections are terminated at a Cloud Load Balancer; mutual TLS (mTLS) is required.

- Cloud Armor enforces rate limiting and WAF policies at the edge.

Tier 2: Outbound Pull Providers (Internal-to-External)

Trust Level: Medium-Low

For high-integrity data feeds (e.g., FlightAware, FAA SWIM) where DroneUp initiates the connection.

Security Controls (NIST SC-7, SC-12)

- Our services initiate outbound traffic via Cloud NAT; no unsolicited inbound traffic is permitted.

- Egress Firewall Rules and IP allowlisting restrict traffic to specific, vetted provider endpoints.

- VPC-SC egress policy explicitly allows the service account to “exit” the perimeter to reach specified external identities or resources. This prevents “data exfiltration” disguised as a legitimate API call.

- Provider credentials are managed via Centralized Secret Management with automated rotation.

Tier 3: Partner Interconnect (Dedicated Connectivity)

Trust Level: Medium-High

Logic: For enterprise partners requiring private, low-latency, or dedicated fiber connections.

Security Controls (NIST SC-7, AC-4)

- If the partner has their own GCP environment, a VPC-SC “Perimeter Bridge” is used. If they are purely on-prem, a VPC-SC Ingress Policy restricted to the Interconnect’s VLAN Attachment is required to prevent spoofing.

- Physical or logical isolation via Dedicated Cloud Interconnect or Partner Interconnect circuits.

- Private Service Connect (PSC) endpoints ensure service-level isolation (the partner only “sees” the specific API, not the whole network).

- Dedicated Service Accounts are used with strictly scoped permissions for each partner.

Tier 4: Internal Zone-to-Zone (Highest Integrity)

Trust Level: High (Trusted Local)

Logic: For traffic moving between project boundaries within our environment (e.g., DevOps to Staging, or App to Logging).

Security Controls (NIST AC-6, SC-7(5))

- All internal projects must be members of the same “Restricted Perimeter.” Any communication between them that is not explicitly defined as an internal “Service to Service” call is blocked by default to prevent lateral movement.

- Cross-project communication is strictly handled via Private Service Connect (PSC), treating every internal project as its own micro-perimeter.

- Workload Identity maps K8s service accounts to IAM roles, eliminating the need for internal passwords or keys.
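The four tiers can be captured as data so that a proposed connection is checked against the controls its tier requires. The following Python sketch is illustrative only. The control labels are hypothetical shorthand for the bullets in each tier, and `missing_controls` is not an existing tool.

```python
from enum import Enum
from typing import Iterable, List


class Tier(Enum):
    INBOUND_PUSH = 1          # Trust Level: Low
    OUTBOUND_PULL = 2         # Trust Level: Medium-Low
    PARTNER_INTERCONNECT = 3  # Trust Level: Medium-High
    INTERNAL_ZONE = 4         # Trust Level: High


# Hypothetical labels summarizing each tier's security controls.
REQUIRED_CONTROLS = {
    Tier.INBOUND_PUSH: frozenset(
        {"vpc-sc-ingress", "operator-auth", "mtls", "cloud-armor"}
    ),
    Tier.OUTBOUND_PULL: frozenset(
        {"cloud-nat", "egress-allowlist", "vpc-sc-egress", "secret-rotation"}
    ),
    Tier.PARTNER_INTERCONNECT: frozenset(
        {"vpc-sc-partner-policy", "interconnect", "psc", "dedicated-sa"}
    ),
    Tier.INTERNAL_ZONE: frozenset(
        {"restricted-perimeter", "psc", "workload-identity"}
    ),
}


def missing_controls(tier: Tier, configured: Iterable[str]) -> List[str]:
    """Return the required controls that a proposed connection has not configured."""
    return sorted(REQUIRED_CONTROLS[tier] - set(configured))
```

An empty result means the connection satisfies its tier; any returned label names a control that still has to be put in place.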

Data-at-Rest Protection via CMEK and Cryptographic Isolation

We are adopting a Customer-Managed Encryption Key (CMEK) strategy across all zones. We will not rely on default provider-managed keys for any sensitive data. Instead, we will maintain full ownership and lifecycle control of the cryptographic keys used to protect our data at rest.

Key Drivers:

All keys will be stored in Cloud HSM (Hardware Security Modules). We will use FIPS 140-3 Level 3 validated hardware to ensure that keys cannot be exported or tampered with, meeting FedRAMP High baseline requirements (NIST SC-12 / SC-13).

We will enable CMEK for every supporting service, including:

- Cloud SQL: For all relational database instances.

- Cloud Storage (GCS): For all object storage and backups.

- Artifact Registry: For all container images and build artifacts.

- Persistent Disks: For all GKE node and workload volumes.

We will enforce a 365-day automated rotation policy for all primary encryption keys. Historical key versions will be maintained to ensure that archived backups remain decryptable (NIST SC-12).

We will grant the cloudkms.cryptoKeyEncrypterDecrypter role only to the specific Service Accounts that require it. No human user (U.S. or Global) will have permanent “Decrypt” permissions in the Production environment.
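Two details of the CMEK strategy are easy to express in code: the 365-day rotation period written as a seconds-based duration string (the form Cloud KMS uses for rotation periods), and a check that a key lives outside the workload project. The Python sketch below uses hypothetical helper names and example resource names.

```python
import re
from datetime import timedelta

ROTATION_PERIOD = timedelta(days=365)

# Cloud KMS key resource name: projects/P/locations/L/keyRings/R/cryptoKeys/K
_KEY_NAME = re.compile(
    r"^projects/([^/]+)/locations/([^/]+)/keyRings/([^/]+)/cryptoKeys/([^/]+)$"
)


def rotation_period_seconds(period: timedelta = ROTATION_PERIOD) -> str:
    """Format a rotation period as a duration string in whole seconds."""
    return f"{int(period.total_seconds())}s"


def key_project(key_name: str) -> str:
    """Extract the project that owns a CMEK key from its resource name."""
    match = _KEY_NAME.match(key_name)
    if match is None:
        raise ValueError(f"not a Cloud KMS key resource name: {key_name}")
    return match.group(1)


def uses_isolated_key(key_name: str, workload_project: str) -> bool:
    """True if the key is held in a different project than the data it protects."""
    return key_project(key_name) != workload_project
```

With the default period, `rotation_period_seconds()` yields `"31536000s"` (365 × 86,400 seconds).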

Centralized Secret Management

We are standardizing on Google Secret Manager for all sensitive credentials, API keys, and certificates (NIST IA-5 / SC-28).

- No secrets shall be stored in source code, configuration files, or environment variables. All secrets must be referenced as pointers to Secret Manager.

- Secrets will be injected into GKE pods at runtime using Secret Manager’s CSI Driver. This ensures that secrets are mounted as ephemeral volumes in memory, never persisting on disk within the workload project.

- All secrets stored in Secret Manager will be encrypted using the Project-Specific KMS Keys defined in the Encryption section of this ADR.

- Every access to a secret will trigger an audit log event sent to centralized logging, capturing who accessed what secret and when.
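The “pointers only” rule can be linted. The sketch below, in Python, flags configuration values that look like secrets but are not Secret Manager version references of the form `projects/*/secrets/*/versions/*`. The key-name heuristics and the `inline_secret_keys` helper are assumptions for illustration, not a complete secret scanner.

```python
import re
from typing import Dict, List

# Secret Manager version resource name: projects/P/secrets/S/versions/V
_SECRET_REF = re.compile(r"^projects/[^/]+/secrets/[^/]+/versions/[^/]+$")

# Hypothetical heuristic: key names that usually hold sensitive values.
_SENSITIVE_HINTS = ("password", "token", "api_key", "secret")


def inline_secret_keys(config: Dict[str, str]) -> List[str]:
    """Return keys that look sensitive but hold a literal instead of a reference."""
    return sorted(
        key
        for key, value in config.items()
        if any(hint in key.lower() for hint in _SENSITIVE_HINTS)
        and not _SECRET_REF.match(str(value))
    )
```

A non-empty result would fail the build, pushing the value into Secret Manager before the change can merge.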

Multi-Domain Authentication Strategy

We are adopting a tiered authentication model that separates administrative access, user-facing application logic, and internal service communication into distinct domains.

Domain 1: Engineer & Administrator Access (Human-to-Infrastructure)

- Trust Direction: External (Corporate) → Internal (Boundary)

- Implementation: Okta federated with Google Cloud Identity.

- Access to production environments processing US government contract data is restricted to US Persons as defined under ITAR/EAR. The global engineering team operates exclusively on commercial-designated workloads, with no access to government-contract data or systems, and authenticates via the corporate SSO (Okta) using MFA. This identity is mapped to a Google Cloud Identity account within the commercial boundary, where IAM roles are applied (NIST IA-2(1), AC-17 (Remote Access)).

Domain 2: Application Authentication (User-to-Application)

- Trust Direction: External (Public) → Application Layer

- Implementation: TBD (Currently evaluating a User IAM Service, potentially Keycloak deployed within GKE).

- This domain manages our end-customers. It remains logically separated from our infrastructure IAM to ensure that a compromise of the user database does not grant access to the underlying GCP resources. (NIST IA-2, SC-3).

Domain 3: GCP-Service Authentication

- Trust Direction: Internal (Zone-to-Zone)

- Implementation: GCP Workload Identity.

- We eliminate the use of static, long-lived service account keys. GKE workloads are assigned a Kubernetes Service Account (KSA) that is cryptographically bound to a Google Service Account (GSA) via an OIDC handshake (NIST AC-6, IA-5).
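The KSA-to-GSA binding is expressed in IAM as a member string of the form `serviceAccount:PROJECT_ID.svc.id.goog[NAMESPACE/KSA_NAME]`, granted `roles/iam.workloadIdentityUser` on the Google Service Account. The Python sketch below only builds that binding as plain data. The helper names and the example project, namespace, and account names are hypothetical.

```python
from typing import Dict, List, Union


def workload_identity_member(project_id: str, namespace: str, ksa_name: str) -> str:
    """Build the IAM member string that identifies a Kubernetes Service Account."""
    return f"serviceAccount:{project_id}.svc.id.goog[{namespace}/{ksa_name}]"


def workload_identity_binding(
    project_id: str, namespace: str, ksa_name: str
) -> Dict[str, Union[str, List[str]]]:
    """IAM binding that lets the KSA act as the Google Service Account it is set on."""
    return {
        "role": "roles/iam.workloadIdentityUser",
        "members": [workload_identity_member(project_id, namespace, ksa_name)],
    }
```

Because the binding names one namespace and one KSA, each application component keeps its own identity and no key file is ever issued.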

Domain 4: Service-to-Service (Workload-to-Workload)

- Implementation: Pod Identity (Workload Identity) + TBD Service Mesh.

- Logic: Workload Identity will provide the “Source of Truth” for pod identity. A Service Mesh (to be determined) can leverage this identity to provide automated mTLS and service-level RBAC.

- Note: See Open Item

Secure Public Ingress

We will utilize the GCP-Native Edge Stack (Cloud DNS, Cloud Armor, Certificate Manager, and Cloud CDN) for the Secure Sandbox. The decision was driven by the nature of the data, which must satisfy FedRAMP Moderate/High and ITAR requirements. A critical component of these frameworks is the protection of “Technical Data,” which includes not only payload data but also the network metadata (IP patterns, system fingerprints, and security logs) that reveals the design or operational capacity of the system. Cloudflare cannot guarantee U.S.-only support to meet that requirement.

Full ADR: <../adr-0140-secure-public-ingress-5005443077/index.md>

Open Items for Future Decisions

SIEM (Security Information and Event Management) Integration

- Status: Pending Product Evaluation and recommendation from advisory firm.

- Context: While we will establish a Centralized Logging Project to aggregate all audit logs (Admin Activity, System Event, and Data Access), we have not yet selected the SIEM platform that will ingest and analyze this data, nor decided whether it will be managed by an external SOC.

- Constraint: The selected tool must be FedRAMP High Authorized (either as a SaaS or a self-hosted instance within our authorized boundary).

- Current Path: We are currently utilizing Google Cloud Logging and Log Analytics for baseline monitoring. We are considering candidates such as Security Command Center Enterprise, Google Chronicle Security Operations, Splunk Cloud (FedRAMP High), and Elastic Cloud (FedRAMP High).

- Impacted Controls: AU-6 (Audit Record Review, Analysis, and Reporting), SI-4 (Information System Monitoring).

Vulnerability Management & Continuous Monitoring

- Status: Pending Tool Finalization and recommendation from advisory firm.

- Context: We need a centralized dashboard to track and remediate vulnerabilities across container images, OS packages, and infrastructure-as-code.

- Current Path: Utilizing GCP Security Command Center (SCC) and Artifact Registry Scanning for the Sandbox. A decision is needed on whether to supplement these with a third-party authorized scanner or continue with hardened open source scanners.

- Impacted Controls: RA-5 (Vulnerability Monitoring and Scanning).

Disaster Recovery (DR) Strategy

- Status: Pending Recovery Time Objective (RTO) / Recovery Point Objective (RPO) definitions.

- Context: While our multi-zone GKE and Cloud SQL setup provides High Availability (HA), a formal Cross-Region Disaster Recovery plan is required.

- Constraint: DR data replication must stay within the boundary (U.S. Only).

- Impacted Controls: CP-6 (Alternate Storage Site), CP-7 (Alternate Processing Site).

Service-to-Service Authentication & Service Mesh Selection

Status: Pending Product Evaluation & Cost-Benefit Analysis.

Context: While Workload Identity provides the foundation for pod identity, a supplemental layer is required to enforce mutual TLS (mTLS) and identity-based authorization for service-to-service communication (NIST SC-8).

Constraints: The selected mesh must support FedRAMP High requirements for FIPS-validated cryptography and must be maintainable by the existing platform engineering team.

Candidates Under Evaluation:

- Anthos Service Mesh (ASM): Managed Istio; provides a “Google-native” experience with tight integration into the Assured Workloads boundary.

- Linkerd: Light-weight and performance-oriented, but requires a commercial license (Buoyant) for enterprise/compliance features and long-term support.

- Istio (Self-Managed): High feature parity but introduces significant operational “toil” and maintenance overhead.

Impacted Controls: SC-8 (Transmission Confidentiality), AC-4 (Information Flow Enforcement), IA-2 (Identification and Authentication).

Data Classification & Labeling Schema

Status: Pending ADR and Governance Review.

Context: While we are building to a FedRAMP High baseline, we must formally define the categories of data that will reside in the environment. This classification dictates the specific handling, retention, and encryption requirements for each data object.

Categories under evaluation:

- Public: Unrestricted data (e.g., public drone flight paths).

- Internal/Proprietary: Sensitive business data (e.g., internal telemetry, operational logs).

- PII/PHI: User-identifiable information requiring strict AC-3/MP-level controls.

- CUI (Controlled Unclassified Information): Data subject to specific federal safeguarding (e.g., flight-sensitive FAA data).

Next Steps: We need to map our data types to these categories and decide on an automated labeling strategy (e.g., using Cloud DLP or GCS Metadata) to enforce policy at the storage layer.

Impacted Controls: RA-2 (Security Categorization), MP-3 (Media Marking), SC-28 (Protection of Information at Rest).

Consequences

When building for a NIST 800-53 High environment, security and compliance are the primary drivers, which naturally introduces certain operational “frictions.”

Positive Consequences

- By using approved in-scope services, we “inherit” the majority of low-level security controls from Google. This significantly reduces the volume of evidence we must manually produce for an audit.

- The use of “Known Good” blueprints minimizes the risk of fundamental design flaws that could cause an audit failure.

- Validating deployments in the Secure Sandbox first greatly reduces the likelihood of security policy failures when they are promoted to the Restricted Production Zone.

Neutral/Negative Consequences

- Developers can no longer “SSH” into a production node or make manual “hotfixes” in the console. All changes must originate in Git and pass through the full Cloud Build pipeline.

- The global team must adapt to the “Curated Build Library.” They cannot pull arbitrary GitHub Actions or public Docker images; they must work within the vetted artifact registry.

- Working within a “Dark VPC” with VPC Service Controls requires a deeper understanding of GCP networking. Engineers may encounter “Permission Denied” errors that are actually VPC-SC perimeter violations, requiring specific troubleshooting skills.

- Engineers may be required to refactor existing codebases to utilize “Approved” managed services. This may temporarily impact development velocity as teams adapt to the restricted service catalog.

- Any requirement for a “non-authorized” service will require a risk-based review. This process involves documenting the business necessity, assessing the security risk, and potentially implementing “compensating controls” (such as additional encryption or isolation), which increases the timeline for architectural approval.
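Because VPC-SC denials are easy to mistake for IAM problems, a small triage helper can speed up troubleshooting. The Python sketch below relies on one heuristic: VPC-SC denial messages carry a `vpcServiceControlsUniqueIdentifier` field. The function name, the return labels, and the sample messages are assumptions for illustration.

```python
def classify_denial(message: str) -> str:
    """Heuristically label a denial as a VPC-SC perimeter violation or something else."""
    # VPC-SC denials include a unique identifier usable to look up the audit log entry.
    if "vpcServiceControlsUniqueIdentifier" in message:
        return "vpc-sc"
    return "other"
```

When the result is `"vpc-sc"`, the next step is to look up the identifier in the audit logs rather than to adjust IAM roles.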

Alternatives Considered

1. Self-Hosted Kubernetes (GKE On-Prem/Manual)

- Why it was rejected: While it offers maximum control, the burden of satisfying NIST CM (Configuration Management) and SI (System and Information Integrity) for the underlying OS and Kubernetes control plane would require a significantly larger U.S.-based DevOps team.

2. Separate “GovCloud” Style Physical Isolation

- Why it was rejected: Managing a completely separate cloud environment (like those offered by other providers) creates “Innovation Lag.” Features often take 6–18 months to reach physically isolated government clouds.

3. Terraform Cloud / GitHub Actions Managed Runners

- Why it was rejected: These services do not currently hold the necessary FedRAMP High P-ATO. Utilizing them would create a “Compliance Gap” in our chain of custody.

4. Single Project Architecture (Standard VPC)

- Why it was rejected: A single project fails the NIST AC-5 (Separation of Duties) requirement. If a developer has “Editor” access to the project to fix an app bug, they also have access to the logs and the KMS keys.

Formal Impact

This will impact the ATOMx civil airspace management system when moved to the Secure Sandbox.

Compliance Traceability Matrix (NIST 800-53 High)

| Technical Decision | NIST Control ID | Control Description | Compliance Rationale |
|---|---|---|---|
| VPC-SC and strict Organization Policies | AC-4 / SC-7 | Information Flow & Boundary Protection | The Fence: Ensures data stays in the U.S., prevents data from being copied to unauthorized external locations, and only authorized personnel can manage the infrastructure. |
| Project Partitioning | AC-5 | Separation of Duties | No Single Key: Prevents one person from having access to both the data and the logs, or both the app and the encryption keys. |
| Cloudflare/Cloud Armor WAF | SC-5 / IA-3 | DoS Protection & Device ID | The Shield: Scrubs internet traffic for attacks and uses mTLS to ensure only recognized “Push Providers” can connect. |
| Cloud NAT | SC-7 / AC-4 | Boundary Protection | One-Way Valve: Allows internal services to communicate externally without ever exposing them to unsolicited inbound connections. |
| CMEK (Cloud HSM) | SC-12 / SC-28 | Crypto Management & Data at Rest | Customer Ownership: Ensures the data is encrypted using keys that we own and manage on hardware that meets FedRAMP High standards. |
| GKE Sandbox | SC-39 | Process Isolation | The Container Guard: Uses gVisor to provide a hardened kernel boundary between the application and the underlying host. |
| Binary Authorization | SI-7 | Software & Information Integrity | The Bouncer: Only allows code that has been scanned and “signed” by our trusted build pipeline to run. |
| Workload Identity | AC-6 | Least Privilege | Just-in-Time Identity: Gives applications exactly the permissions they need for the time they need them, with no static passwords. |
| Secret Manager | IA-5 / SC-28 | Authenticator Management | The Safe: Securely stores and rotates passwords and API keys so they never appear in plain text in the code. |
| Logging Project | AU-9 | Audit Integrity | The Black Box: Creates a tamper-proof record of every action that administrators cannot delete or modify. |
| Workload Identity & Scoped SAs | AC-2 / SC-12 / AU-12 | Account Management & Crypto Keys | The Identity Guard: Maps identities to specific tasks; ensures every Service Account (SA) has a unique purpose and a monitored key lifecycle. |
| SA-Based Project Access | AC-5 / AU-9 | Separation of Duties & Audit | No God Accounts: Ensures functional isolation; for example, SAs that write data cannot delete the logs that record those writes. |
| Automated SA Creation via Terraform | CM-3 | Configuration Change Control | The Paper Trail: Prevents manual “shadow” accounts; all identities must be defined in code, reviewed, and approved via the PR process. |
| Binary Authorization & Google Cloud Build | SI-7 / CM-5 | Software Integrity / Access | The Verified Build: Only code that passes CI/CD security scans can be deployed; prevents unauthorized or manual code execution in the sandbox. |
| Unified Deployment Strategy | CM (General) | Configuration Management | State Control: Ensures the Sandbox architecture remains a valid mirror of higher-impact zones through shared Infrastructure as Code. |
| Centralized DevOps Project | CM-2 / AC-6 | Baseline & Least Privilege | The Factory: Isolates build tools from the running app; developers can manage pipelines without having direct access to the application’s runtime environment. |
| Auto-Patching & Vulnerability Scanning | SI-2 / AU-12 | Flaw Remediation & Audit | Self-Healing: Uses GKE Autopilot to manage security patches and Artifact Registry to automatically scan for vulnerabilities. |
| Opaque Resource Naming | SC-38 | Information Hiding | The Mask: Uses non-descriptive naming conventions to hide the system’s internal purpose and architecture from potential discovery. |
| Okta to Cloud Identity Federation | IA-2 / AC-17 | Identification & Remote Access | The Personnel Gate: Ensures only vetted engineers with MFA can access the management plane from the corporate network. |
| User IAM Service (Keycloak TBD) | IA-2 / SC-3 | User Identification & Isolation | The Customer Gate: Isolates end-user credentials from infrastructure-level credentials, preventing lateral escalation. |
| GCP Workload Identity | IA-5 / AC-6 | Authenticator Management & Least Privilege | The Service Handshake: Removes static passwords for apps; services prove who they are using short-lived tokens. |