Segmenting Flight Intents

Originally ADR-0109-Segmenting-Flight-Intents (v8)

Jira ticket - UNCREW-2444

Context

InterUSS and Operational Intents

To achieve strategic deconfliction, UAV traffic participants reserve airspace for their upcoming flights by submitting Operational Intents to InterUSS/DSS. The current standard requires that each such Intent is expressed in terms of overlapping 4D volumes:

{"extents":[{

"volume":{

"outline_polygon":{"vertices":[]},

"altitude_lower":{"value":-6,"reference":"W84","units":"M"},

"altitude_upper":{"value":110,"reference":"W84","units":"M"}},

"time_start":{"value":"2023-03-08T18:01:38Z","format":"RFC3339"},

"time_end":{"value":"2023-03-08T19:01:38Z","format":"RFC3339"}}],"key":[],"uss_base_url":"...","state":"Accepted","subscription_id":null,"new_subscription":

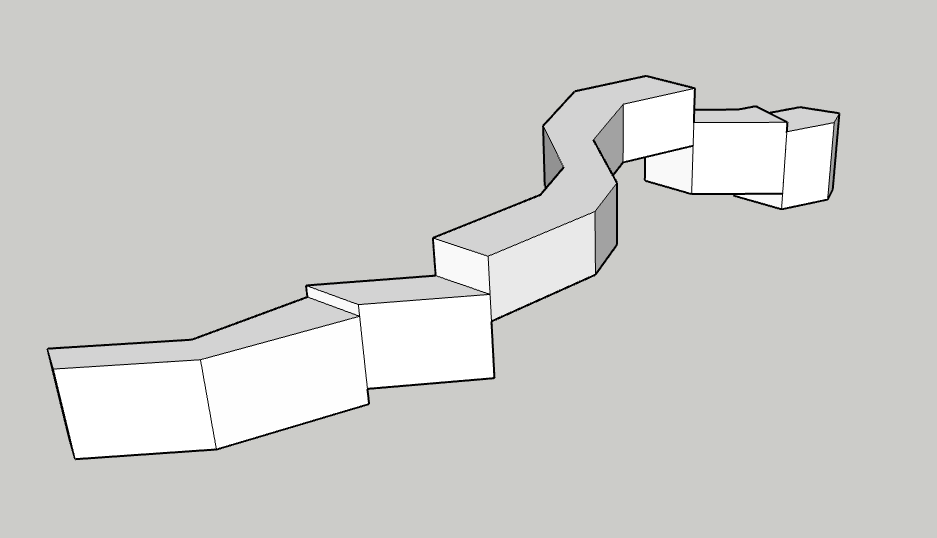

...That way Operational Intents can (spatially) end up looking like this:

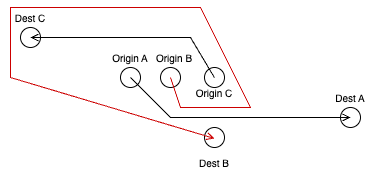

That is, each Operational Intent reserves everything between the ground and its maximum altitude. As a consequence, Uncrew operations, which want to reserve all the air below them, cannot cross one another, which forces B into:

This means that Uncrew has to segment its Operational Intents into multiple volumes. The relevant fragment of the (non-free) standard states this on segmenting:

3.2.32 operational intent, n—a volume-based representation of the intent for a UAS operation; comprises one or more overlapping or contiguous 4D volumes, where the start time for each volume is the earliest entry time, and the stop time for each volume is the latest exit time.

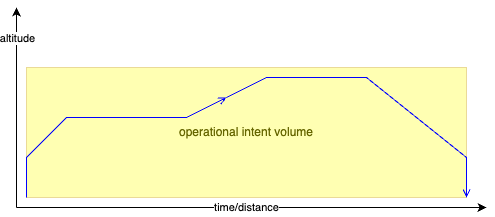

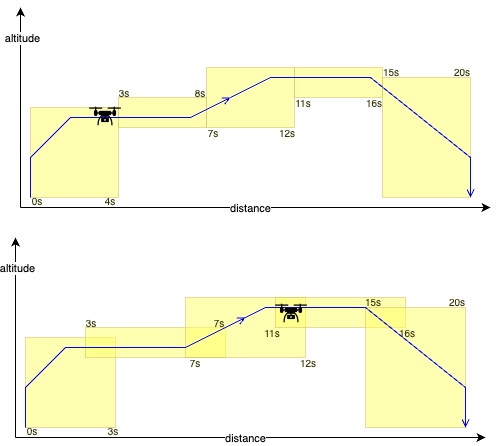

Uncrew could then do the following (please extend your imagination to the horizontal axis representing both time and distance - drawing 4D concepts on paper is difficult):

Owing to the uncertainty with which we can predict the UAV’s position in time, the volumes must overlap (spatially, temporally or both), and they can overlap in whichever way allows the submitter to guide the UAV through them:

Prediction/Estimation

As difficult as it sounds, in order to segment its Operational Volumes, Uncrew has to be able to predict, with some practical accuracy, how far along the mission the UAV will be at a given time.

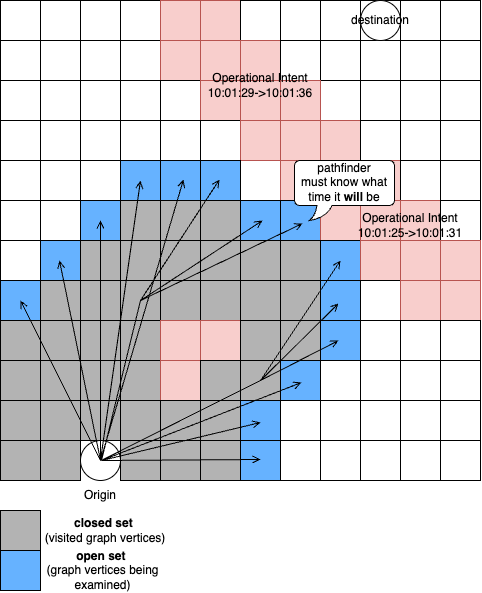

An important thing to note is that, ultimately, the prediction is tightly interleaved with the process of planning. It has to be quick and robust enough to be executed with every iteration of the pathfinder:

When examining a collision with a 4D volume, the pathfinder has to know at what time the UAV will be at any given open-set member (every iteration), and thus has to continuously calculate time from origin, much as it calculates distance and cost from origin.

This is architecturally significant, as it indicates that the pathfinder will ultimately produce 4D flight paths.
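A minimal sketch of what carrying time alongside distance in the open set can look like. It is a plain Dijkstra search over a hypothetical adjacency-map graph, assumes a constant cruise speed, and models reserved 4D volumes as per-node time windows. `find_path`, `graph` and `reservations` are illustrative names, not the pathfinder's actual API.

```python
import heapq


def find_path(graph, origin, goal, speed_mps, start_time_s, reservations):
    """Shortest path that avoids nodes reserved at the predicted arrival time.

    graph:        {node: {neighbour: edge_length_m}}      (hypothetical shape)
    reservations: {node: [(reserved_from_s, reserved_to_s), ...]}
    Returns (path, distance_m, arrival_time_s) or None if no path exists.
    """
    # Every open-set entry carries distance AND time from origin.
    open_set = [(0.0, start_time_s, origin, [origin])]
    best_distance = {origin: 0.0}

    while open_set:
        distance, time_s, node, path = heapq.heappop(open_set)
        if node == goal:
            return path, distance, time_s

        for neighbour, edge_length in graph[node].items():
            next_distance = distance + edge_length
            # Assumption: constant speed; a real estimator would be kinematic.
            next_time = time_s + edge_length / speed_mps

            # 4D collision check: is the neighbour reserved when we get there?
            windows = reservations.get(neighbour, [])
            if any(lo <= next_time <= hi for lo, hi in windows):
                continue

            if next_distance < best_distance.get(neighbour, float("inf")):
                best_distance[neighbour] = next_distance
                heapq.heappush(
                    open_set,
                    (next_distance, next_time, neighbour, path + [neighbour]),
                )
    return None


graph = {"A": {"B": 100, "C": 150}, "B": {"D": 100}, "C": {"D": 150}, "D": {}}
free = find_path(graph, "A", "D", 10.0, 0.0, {})
detour = find_path(graph, "A", "D", 10.0, 0.0, {"B": [(5, 15)]})
```

Here `free` takes the short route through B, while `detour` is pushed through C because B is reserved at the predicted arrival time. Because blocking depends on arrival time, pruning on distance alone can discard a later but feasible arrival; a production search would have to account for that.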

Meanwhile, Auterion is helping us solve this problem by extracting the estimation algorithm from AMC and either putting it on the vehicle or giving us the code.

Numbers

Let’s look at some numbers then. Let’s consider an operationally representative mission that takes off to 50 m and then flies 5 km out.

With these UAV params (taken from our warehouse)

    UAVConfig = {
      "vertical": {
        "up": {
          "max_speed_mps": 1.8,
          "acceleration_mpss": 1
        },
        "down": {
          "max_speed_mps": 0.7,
          "acceleration_mpss": 2
        }
      },
      "horizontal": {
        "max_speed_mps": 16,
        "acceleration_mpss": 2
      },
      "acceptance_radius_mts": 5
    }

A basic kinematic simulation indicates that:

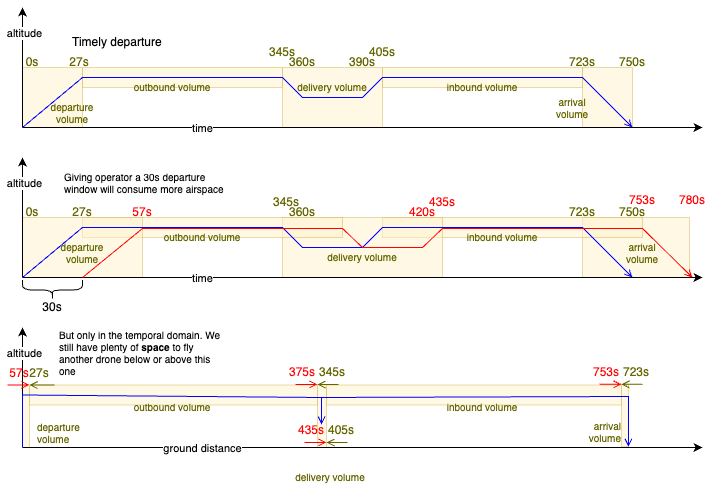

- it will take the UAV ~27 s to climb (max climbing speed is 1.8 m/s, acceleration 1 m/s²);

- ~345 s (~5.75 min) into its flight it will reach its delivery point 5 km out;

- it will take a further ~15 s to descend to the delivery altitude, yielding a 360 s operation.
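The simulation behind these figures is not described in detail. The sketch below is one plausible reading: a rest-to-rest trapezoidal velocity profile, assuming the same acceleration for speeding up and braking and ignoring the acceptance radius, jerk and turns. `leg_time_s` is a hypothetical helper; it lands in the same ballpark as the figures above, not exactly on them.

```python
import math


def leg_time_s(distance_m, max_speed_mps, acceleration_mpss):
    """Time to fly a straight leg from rest to rest.

    Assumes a symmetric trapezoidal speed profile: accelerate at a constant
    rate, cruise at max speed, brake at the same constant rate.
    """
    # Distance consumed by the acceleration and braking ramps together.
    ramps_distance_m = max_speed_mps ** 2 / acceleration_mpss

    if distance_m <= ramps_distance_m:
        # Leg too short to reach max speed: triangular profile.
        return 2.0 * math.sqrt(distance_m / acceleration_mpss)

    ramps_time_s = 2.0 * max_speed_mps / acceleration_mpss
    cruise_time_s = (distance_m - ramps_distance_m) / max_speed_mps
    return ramps_time_s + cruise_time_s


climb_s = leg_time_s(50, 1.8, 1)      # about 29.6 s with the config above
cruise_s = leg_time_s(5000, 16, 2)    # 320.5 s with the config above
```

Under these assumptions the UAV reaches the delivery point about 350 s after take-off, within a few seconds of the simulated ~345 s.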

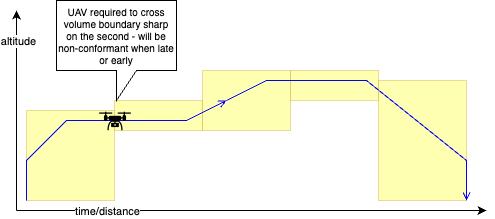

Here is an example segmentation of this operation:

This segmentation gives no reason why Uncrew Operational Intent volumes should overlap in space, which allows operations to cross and thus solves our problem. Land-grabbing airspace, if only in its temporal domain, still wastes it and limits the amount of traffic we can squeeze into the air. We will have to look into it one day.
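To illustrate why segmented volumes let two operations cross, here is a minimal conflict test. It uses a hypothetical, simplified `Volume4D` with a lng/lat bounding box in place of the outline polygon; the point is only that two volumes conflict when they overlap in every dimension at once, so disjoint time windows are enough to separate them.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Volume4D:
    """Hypothetical simplification: bounding box instead of outline polygon."""
    min_lng: float
    max_lng: float
    min_lat: float
    max_lat: float
    altitude_lower_m: float
    altitude_upper_m: float
    time_start: datetime
    time_end: datetime


def intervals_overlap(a_lo, a_hi, b_lo, b_hi):
    """True if closed intervals [a_lo, a_hi] and [b_lo, b_hi] share a point."""
    return a_lo <= b_hi and b_lo <= a_hi


def volumes_conflict(a, b):
    """Volumes conflict only if they overlap in space, altitude AND time."""
    return (
        intervals_overlap(a.min_lng, a.max_lng, b.min_lng, b.max_lng)
        and intervals_overlap(a.min_lat, a.max_lat, b.min_lat, b.max_lat)
        and intervals_overlap(a.altitude_lower_m, a.altitude_upper_m,
                              b.altitude_lower_m, b.altitude_upper_m)
        and intervals_overlap(a.time_start, a.time_end,
                              b.time_start, b.time_end)
    )


def utc(hour, minute):
    return datetime(2023, 3, 8, hour, minute, tzinfo=timezone.utc)


# Same crossing point and altitude band, but different time windows.
crossing_a = Volume4D(-76.19, -76.18, 36.84, 36.85, -6, 110, utc(18, 0), utc(18, 5))
crossing_b = Volume4D(-76.19, -76.18, 36.84, 36.85, -6, 110, utc(18, 6), utc(18, 10))
whole_hour = Volume4D(-76.19, -76.18, 36.84, 36.85, -6, 110, utc(18, 0), utc(19, 0))
```

`crossing_a` and `crossing_b` do not conflict, whereas either of them conflicts with `whole_hour`, the unsegmented reservation that holds the same airspace for the entire flight.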

Decision

Enforce Timely Departure

We don’t have to control the time of departure in order to solve the problem of crossing paths, but we have to do it in order to comply with the Operational Intents we ourselves submit. There are two ways of doing this:

- Submit the operation 2 min before departure (that’s the time the supervisor has to review it and assign it to an operator) and surface it to the operator 10 s before its fixed time of departure, with a countdown the operator has to acknowledge.

- Resubmit the operation with new timestamps when the operator presses “start”. The resulting new 4D volumes, however, may now conflict with an operation someone has submitted since. That someone is unlikely to be a third party; it is more probably another Uncrew operation from the same airfield. We could prevent that by having the pathfinder increase the time separation to the average time between original submission and eventual flight.

Both strategies allow us to control the time of departure; the former imposes on the UX, the latter makes less efficient use of airspace.

Pathfinder

We observed that the process of estimation needs to occur very close to the pathfinder, so we will put it into the pathfinder.

Output

Let’s start with the output, i.e. what information do we need? This is a short example of what the pathfinder produces today:

{

"path":[

[-76.18453, 36.84227, 59],

[-76.18428, 36.84258, 59]

],

"terrain":[4.0, 4.0],

"stats":{

"path":{

"groundDistanceMts":41.02607081523392,

"distanceMts":41.02607081523392,

"tookMs":226

},

"scene":{

"tookMs":6437,

"dimensionsMts":[1816.594022071099, 2270.2297523272096]

}

}

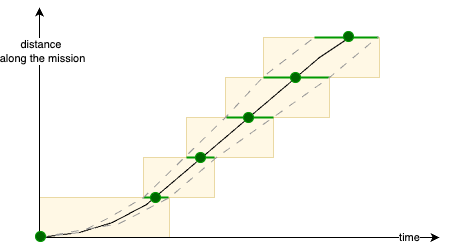

}We might have the pathfinder decorate the path’s waypoints with timestamps, but distances between successive waypoints can stretch kilometers and thus minutes. Those need breaking up before submission to UTM. Additionally, the progression of a UAV along a segment isn’t linear in time. It probably accelerates at the segment’s start and decelerates at the segment’s end. The acceleration itself won’t be constant (or linear?) owing to turn radius and non-infinite jerk. We will be better off asking the pathfinder to also return us a path chopped into segments already:

    {
      "max_edge_length_path": [
        [-76.18453, 36.84227, 59, 1723570000],
        [-76.18453, 36.84227, 109, 1723570030],
        [-76.18481, 36.84225, 109, 1723570034],
        [-76.18502, 36.84221, 109, 1723570040],
        ...
        [-76.19231, 36.84219, 90, 1723570341],
        [-76.19231, 36.84219, 40, 1723570421]
      ]
    }

The caller shall compose the Operational Intent volumes by adding the accuracy (error accumulating over distance) like so:
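One way to read this step in code, as a sketch under stated assumptions rather than the actual implementation. The error model (a time error growing linearly with distance flown, `time_error_s_per_km`) and the fixed `altitude_margin_m` are assumptions made for illustration; buffering the horizontal footprint into an outline polygon is left out.

```python
import math

EARTH_RADIUS_M = 6_371_000.0


def ground_distance_m(p, q):
    """Haversine distance between two [lng, lat, ...] points."""
    lng1, lat1, lng2, lat2 = map(math.radians, (p[0], p[1], q[0], q[1]))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lng2 - lng1) / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))


def compose_volumes(timed_path, time_error_s_per_km, altitude_margin_m):
    """Turn [lng, lat, alt, t] points into one time-padded volume per edge."""
    volumes = []
    travelled_m = 0.0
    for start, end in zip(timed_path, timed_path[1:]):
        # Assumed error model: uncertainty grows linearly with distance flown.
        error_at_start_s = time_error_s_per_km * travelled_m / 1000
        travelled_m += math.hypot(ground_distance_m(start, end), end[2] - start[2])
        error_at_end_s = time_error_s_per_km * travelled_m / 1000

        volumes.append({
            "endpoints": [start[:3], end[:3]],  # footprint buffering omitted
            "altitude_lower": min(start[2], end[2]) - altitude_margin_m,
            "altitude_upper": max(start[2], end[2]) + altitude_margin_m,
            "time_start": start[3] - error_at_start_s,  # earliest entry
            "time_end": end[3] + error_at_end_s,        # latest exit
        })
    return volumes


timed_path = [
    [-76.18453, 36.84227, 59, 1723570000],
    [-76.18453, 36.84227, 109, 1723570030],
    [-76.18481, 36.84225, 109, 1723570034],
]
volumes = compose_volumes(timed_path, time_error_s_per_km=10, altitude_margin_m=10)
```

Padding both ends of every edge makes consecutive volumes overlap in time, which matches the standard's wording of earliest entry and latest exit.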

Input

On top of the start time, the pathfinder also receives:

    ...
    "PathParameters": {
      ...
      "time": {
        "performance_envelope": {
          "vertical_up": {
            "max_speed_mps": {
              "description": "Maximum climbing speed in meters per second",
              "type": "number"
            },
            "acceleration_mpss": {
              "description": "Average acceleration when climbing in meters per second squared",
              "type": "number"
            }
          },
          "vertical_down": {
            "max_speed_mps": {
              "description": "Maximum descending speed in meters per second",
              "type": "number"
            },
            "acceleration_mpss": {
              "description": "Average acceleration when descending in meters per second squared",
              "type": "number"
            }
          },
          "horizontal": {
            "max_speed_mps": {
              "description": "Maximum horizontal speed in meters per second",
              "type": "number"
            },
            "acceleration_mpss": {
              "description": "Average horizontal acceleration in meters per second squared",
              "type": "number"
            }
          }
        },
        "acceptance_radius_mts": {
          "description": "Carrot interface acceptance radius in meters",
          "type": "number"
        }
      }
      ...

Compliance Monitoring

Uncrew must monitor that UAVs fly within the 4D volumes assigned to them and improve its estimation if they don’t. For that Uncrew has to:

- Post each Operational Intent successfully submitted to UTM to FlightLog (not just the mission);

- Be alerted (via Honeycomb) of every non-compliant flight;

- If the flight wasn’t contingent, add the telemetry of this flight to the body of unit tests that the estimator has to pass.
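A sketch of the per-flight check that could sit behind such an alert, assuming simplified volumes with a lng/lat bounding box and numeric timestamps. `inside` and `non_compliant_samples` are hypothetical names; no FlightLog or Honeycomb API is shown.

```python
def inside(sample, volume):
    """True if a telemetry sample lies within a volume in all four dimensions."""
    return (
        volume["time_start"] <= sample["t"] <= volume["time_end"]
        and volume["altitude_lower"] <= sample["alt"] <= volume["altitude_upper"]
        and volume["min_lng"] <= sample["lng"] <= volume["max_lng"]
        and volume["min_lat"] <= sample["lat"] <= volume["max_lat"]
    )


def non_compliant_samples(telemetry, volumes):
    """Samples covered by none of the submitted volumes.

    Volumes of one Operational Intent overlap, so being inside any one of
    them at the sample's timestamp is enough to be compliant.
    """
    return [s for s in telemetry if not any(inside(s, v) for v in volumes)]


volumes = [{
    "min_lng": -76.19, "max_lng": -76.18,
    "min_lat": 36.84, "max_lat": 36.85,
    "altitude_lower": 50, "altitude_upper": 120,
    "time_start": 100, "time_end": 200,
}]
telemetry = [
    {"lng": -76.185, "lat": 36.845, "alt": 109, "t": 150},  # on schedule
    {"lng": -76.185, "lat": 36.845, "alt": 109, "t": 250},  # too late
]
late = non_compliant_samples(telemetry, volumes)
```

A non-empty result marks the flight as non-compliant; the same telemetry could then be replayed against the estimator as a regression case.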

Alternatives Considered

Accuracy of the Estimation

We decided to give control over the accuracy of the estimation to the pathfinder’s caller. This is a tough one:

- If the estimation is executed purely on a kinematic model (something integrating speeds over time), then that something will just produce a number and the receiver must add the operational error to it;

- If the estimation is executed on top of some kind of a machine learnt model, then it itself comes with the observed error that it should put into the result.

We don’t have a machine-learnt model yet, so we will have to make do with the kinematic model for starters and add a generous, albeit not rigorously measured, error to it. With time we will learn what this error is and can tighten it. We might also find time to build that machine-learnt model and be compelled to put it into the pathfinder. To facilitate this, we could ask the pathfinder that, instead of:

    {
      "max_edge_length_path": [
        [-76.18453, 36.84227, 59, 1723570000],
        [-76.18453, 36.84227, 109, 1723570030],
        [-76.18481, 36.84225, 109, 1723570034],
        [-76.18502, 36.84221, 109, 1723570040],
        ...
        [-76.19231, 36.84219, 90, 1723570341],
        [-76.19231, 36.84219, 40, 1723570421]
      ]
    }

it returns

    {
      "max_edge_length_path": [
        [-76.18453, 36.84227, 59, [1723570000, 1723570000]],
        [-76.18453, 36.84227, 109, [1723570030, 1723570031]],
        [-76.18481, 36.84225, 109, [1723570034, 1723570036]],
        [-76.18502, 36.84221, 109, [1723570040, 1723570043]],
        ...
        [-76.19231, 36.84219, 90, [1723570341, 1723570511]],
        [-76.19231, 36.84219, 40, [1723570421, 1723570625]]
      ]
    }

i.e.: [lng, lat, alt, [fastest_time, slowest_time]]
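With this shape the caller would no longer add its own error. A minimal sketch, assuming each edge of the path becomes one volume: the volume opens at the fastest arrival at the edge's first point and closes at the slowest arrival at its second. `volume_time_bounds` is a hypothetical helper.

```python
def volume_time_bounds(path):
    """Per-edge (time_start, time_end) from [lng, lat, alt, [fastest, slowest]].

    time_start is the earliest possible entry into the edge,
    time_end the latest possible exit from it.
    """
    return [
        (entry[3][0], exit_[3][1])
        for entry, exit_ in zip(path, path[1:])
    ]


path = [
    [-76.18453, 36.84227, 59, [1723570000, 1723570000]],
    [-76.18453, 36.84227, 109, [1723570030, 1723570031]],
    [-76.18481, 36.84225, 109, [1723570034, 1723570036]],
]
bounds = volume_time_bounds(path)
# bounds == [(1723570000, 1723570031), (1723570030, 1723570036)]
```

The two resulting windows overlap by one second, so consecutive volumes stay contiguous in time without any extra padding.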

But then we have to remember that ultimately we’re not looking to guess where PX4 will take the UAV at some specific time, but to tell it to take the UAV there at that time. This means that the pathfinder should just be producing a number and that we probably shouldn’t be building machine-learnt time prediction models. When this happens, max_edge_length_path disappears entirely and path itself becomes a more fine-grained prescription of the desired 4D location.

The only reason why we don’t produce a fine-grained path from the outset is that, with a small acceptance radius, every such gratuitous waypoint would slow the UAV down unnecessarily.