Onboard Nodes

Originally

ADR-0095-OnBoard-Nodes (v4)

Context

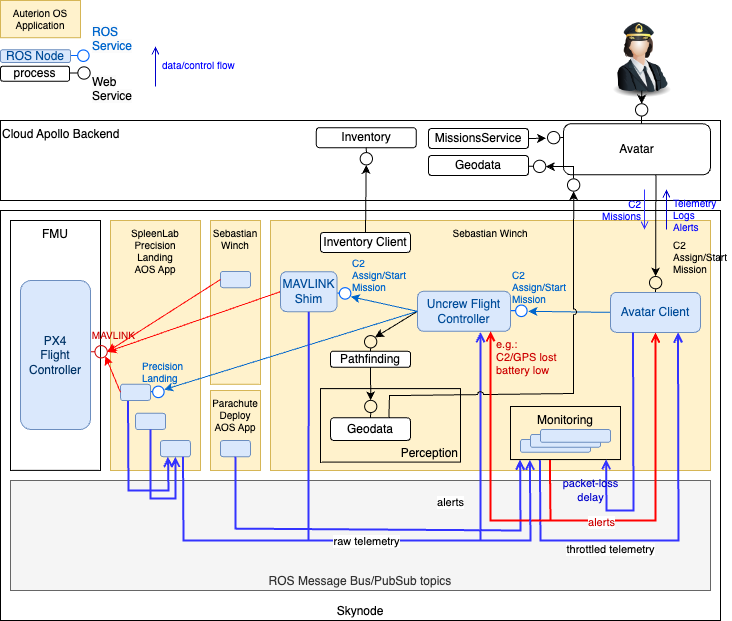

This ADR shares its context with its predecessor. There we decided that the on-board architecture of Uncrew would be distributed among multiple services (ROS2 Nodes), that Auterion OS would orchestrate them and that they would communicate using ROS2 primitives (services and pubsub). We did not decide what these services should be. This ADR is the first pass at splitting the Uncrew on-board function into smaller services.

Decision

Drawing inspiration from cloud computing, we chose to split the Uncrew on-board function finely into single-purpose micro-services. Some aspects of cloud computing (like horizontal scalability) don’t find parallels in embedded systems, but we believe that enough do to justify following the style.

Inventory Client

Likely the least controversial piece of the puzzle is recognizing that the UAV can communicate with the Inventory service independently of much else and will need to do so before the Avatar even exists. This independent Inventory Client can either deposit the mTLS certificates it negotiates in a local file system or pass them on to whatever on-board system needs them via a ROS2 pubsub.

From that same ROS2 pubsub (the throttled telemetry topic) it can sift out the state it wishes to pass to the Inventory (e.g.: current location and battery charge).

The information on the airframe configuration, peripherals and software versions that we want communicated to the Inventory, it can fetch from the Auterion Suite.

Geodata

The Geodata service feeds map data/situational awareness to the Pathfinder. The on-board Pathfinder and Geodata service don’t need to differ from their cloud counterparts. The on-board Geodata service is just a leaf in the cascade/chain of the Geodata services with the origin somewhere with the UTM.

The important question is: should the Geodata service communicate with its upstream via the Avatar or directly? When speculating about the Avatar, we said that “all communications with the UAV must pass the Avatar”. Did we really mean that?

| In favour of: directly | In favour of: via Avatar |
|---|---|
| Geodata is just another kind of data that the UAV needs to exchange with Apollo, and putting this new interface on the Avatar’s shoulders smells like a slippery slope towards it turning into a God object. | Every exchange between the upstream and downstream Geodata services needs to be mTLS authenticated (the Avatar already does this; the cloud Geodata would need to start doing what the Inventory does). |
| It will also be an extra hop, point of failure and resource drain. | Every exchange between the upstream and downstream Geodata services needs to be logged into the FlightLog. Sure, sometimes it can be sourced from the ulog after the drone lands, but the UAV and the Avatar have unique perspectives (for instance with respect to timing) and these perspectives may be invaluable when diagnosing failures. |
| Whenever the Geodata interface changes, so will the Avatar’s. | Communicating via the Avatar gives us a chance to apply an adaptation layer that helps handle communication when different versions of the Geodata service interface are in use. |
| | Every exchange between UAV and Apollo needs to be curated. It is by definition a closed, short whitelist and that list has to manifest somewhere (e.g.: as explicit interfaces the Avatar exposes). |
| | Every exchange between the upstream and downstream Geodata services needs to be prioritized, QoS-rationed and shaped both ways. The bandwidth the Avatar and UAV have at their disposal is a scarce resource, the usage of which needs to be controlled, and so it is reasonable to demand that all traffic (Geodata specifically) passes the Avatar so that this control can be exercised. |
| | A proxy of a specific, IDL-described interface can simply be code-generated during the Avatar build process. |

The arguments we see indeed reaffirm that “all communications with the UAV must pass the Avatar”, but we must also admit that they start putting structural strain on the Avatar itself, and we may need to rethink how far the Avatar can stick out of its “just a proxy” role. This, however, is out of scope of this ADR.

NOTE: the Inventory Client talking directly with the Inventory service is the one exception to the rule. The Avatar won’t even exist when the Inventory Client on-boards the UAV with the Inventory service.
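
The curation and prioritization arguments above can be illustrated with a minimal sketch. It assumes a hypothetical whitelist that maps interface names to priorities and a per-drain byte budget; none of the names (`ALLOWED_INTERFACES`, `CuratedLink`) come from the Avatar's actual design.

```python
import heapq
from dataclasses import dataclass, field
from itertools import count

# Hypothetical whitelist: interface name -> priority (lower number is sent first).
ALLOWED_INTERFACES = {"c2": 0, "telemetry": 1, "geodata": 2}


@dataclass(order=True)
class _Queued:
    priority: int
    seq: int  # tie-breaker that keeps FIFO order within one priority
    interface: str = field(compare=False)
    payload: bytes = field(compare=False)


class CuratedLink:
    """Accepts only whitelisted interfaces and drains them in priority order."""

    def __init__(self, allowed):
        self._allowed = dict(allowed)
        self._queue = []
        self._seq = count()
        self.dropped = []  # interfaces rejected because they are not whitelisted

    def submit(self, interface, payload):
        priority = self._allowed.get(interface)
        if priority is None:
            self.dropped.append(interface)
            return False
        heapq.heappush(
            self._queue, _Queued(priority, next(self._seq), interface, payload)
        )
        return True

    def drain(self, budget_bytes):
        """Pop messages in priority order until the next one exceeds the budget."""
        sent = []
        while self._queue and len(self._queue[0].payload) <= budget_bytes:
            item = heapq.heappop(self._queue)
            budget_bytes -= len(item.payload)
            sent.append((item.interface, item.payload))
        return sent
```

In this sketch anything not on the whitelist is dropped at `submit`, and Geodata only gets the bandwidth that higher-priority traffic leaves unused.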

Uplink

The uplink at the UAV’s disposal is a scarce resource and (as already mentioned) we must curate (firewall), prioritize and ration access to it. There are several kinds of traffic that we may wish to put on this uplink. There will be some that we have missed (e.g.: NTP), but any other outgoing traffic we will probably need to drop.

We don’t yet know exactly how QoS will work, i.e.: how an on-board application will attach a specific QoS class to the traffic it emits. Examples:

- the process sending position telemetry to the Avatar should want to attach a high-priority QoS class to this traffic.

- the process sending video should want to attach a low-priority QoS class to the video frames.

This is one way they could do it:

We could even enforce it (by configuring docker networks or similar) if we don’t trust, or have no control over, which NICs specific processes bind to.

The purpose of these deliberations is to note that access to the uplink should be controlled/policed/shaped with the on-board (Skynode) OS IP networking infrastructure (NICs and routing tables), and not to speculate about a dedicated forward proxy.
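
As a rough illustration of processes binding to NICs, the sketch below assumes each QoS class is given its own (possibly virtual) NIC with a known local address, and that the OS routing tables shape traffic by source address. The mapping and the function name are hypothetical, and loopback addresses stand in for real interface addresses.

```python
import socket

# Hypothetical mapping: QoS class -> local address of the NIC carrying that class.
# Loopback stands in for real interface addresses in this sketch.
QOS_CLASS_ADDRESS = {
    "high": "127.0.0.1",  # e.g. position telemetry
    "low": "127.0.0.1",   # e.g. video frames
}


def open_uplink_socket(qos_class: str) -> socket.socket:
    """Return a UDP socket bound to the local address assigned to qos_class."""
    local_address = QOS_CLASS_ADDRESS[qos_class]  # KeyError for unknown classes
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.bind((local_address, 0))  # port 0 lets the OS pick an ephemeral port
    return sock
```

The application only chooses a class; the policing itself (queueing, rate limits, dropping traffic from unknown sources) would live in the OS networking configuration, which is outside this sketch.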

Avatar Client & Uncrew Flight Controller

The UAV exposes an interface to Apollo (specifically the Avatar), with which Apollo can navigate the UAV (tell it to go to specific coordinates or to stop and land) and receive telemetry in exchange.

Meanwhile PX4 exposes a ROS2 interface to accept C2 commands and emit telemetry and something has to do the translation.

Meanwhile still, we think there needs to be a central entity and arbiter that decides what the drone should do next. There is, after all, just one driver in every car. This is the entity that will implement the control loop and, with some frequency, decide what the drone’s XYZ velocities should be so that it homes in on the next mission waypoint. Doing all that will implement the Uncrew Mission Flight Mode (what we sometimes badly name the PX4 Off-board Mode). Sure, this entity can delegate the flying to some other on-board agent (like the SpleenLab precision landing), but it’s still in control because it can revoke the delegation at any time. Let’s call this process the Uncrew Flight Controller.
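
One step of such a control loop could look like the sketch below. It assumes positions in a local metric XYZ frame and a simple proportional law capped at a maximum speed; the function name, gain, speed limit and arrival radius are illustrative values, not decisions of this ADR.

```python
import math


def velocity_setpoint(position, waypoint, max_speed=5.0, gain=0.5, arrival_radius=0.5):
    """Return a (vx, vy, vz) velocity in m/s steering from position to waypoint.

    All parameters are hypothetical: gain is in 1/s, distances are in metres.
    """
    dx, dy, dz = (w - p for p, w in zip(position, waypoint))
    distance = math.sqrt(dx * dx + dy * dy + dz * dz)
    if distance <= arrival_radius:
        return (0.0, 0.0, 0.0)  # close enough: stop and wait for the next waypoint
    speed = min(max_speed, gain * distance)  # slow down when approaching
    scale = speed / distance
    return (dx * scale, dy * scale, dz * scale)
```

The loop would call this with some frequency using the latest position estimate and publish the result as the velocity command.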

The question then emerges: Should it be the Uncrew Flight Controller that exposes the interface to the Avatar and translates to ROS2/PX4? There don’t seem to be good reasons for it:

- The Flight Controller should continue functioning even if the Avatar Client is not there or was never there (an Avatar Client-less autonomous vehicle).

- The Avatar Client interface Uncrew exposes can change (e.g.: away from gRPC to something else), or there could be more than one (different) Avatar Client interface that we wish to expose (think of the Visual Observer coming to help mid-flight: they are on the same subnet, so they can dial the UAV).

This yields two processes/services/ROS2 Nodes:

- The standalone Uncrew Flight Controller, UFC (cool abbrev.), exposing the Avatar Client interface to:

- The Avatar Client that connects to the Avatar, forwards C2 from it to the UFC and sources telemetry from ROS2 topics to forward it to the Avatar.
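
The split can be sketched as two plain classes. This is only an illustration of the dependency direction: the class and method names, the message shape and the `precision_landing` agent name are hypothetical, and real nodes would talk over ROS2 rather than direct method calls.

```python
class UncrewFlightController:
    """Single arbiter of what the vehicle does next (hypothetical interface)."""

    def __init__(self):
        self.target = None
        self.delegate = None  # agent currently flying on the UFC's behalf, if any

    def goto(self, waypoint):
        self.target = waypoint

    def delegate_to(self, agent):
        self.delegate = agent

    def revoke(self):
        # The UFC can take control back at any time.
        self.delegate = None

    def in_control(self):
        return self.delegate or "UFC"


class AvatarClient:
    """Translates Avatar messages into UFC calls; one of possibly several front ends."""

    def __init__(self, ufc):
        self._ufc = ufc

    def on_avatar_message(self, message):
        if message.get("type") == "goto":
            self._ufc.goto(tuple(message["waypoint"]))
            return True
        return False  # unknown message types are not forwarded
```

The UFC has no reference to the Avatar Client, so it keeps working if the client is absent, and a second front end could be added without touching it.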

We don’t yet know ROS2 well enough to say how to prevent nodes other than the Avatar Client from sending C2 commands. A ROS application is a closed system and it appears nodes just trust other nodes to do the right thing. Some people ask security-related questions on forums, so we will learn more as time goes on.

It is worth noting that the UFC might one day break apart too - say to support PX4 and DJI. We argue it’s not something we should worry about at the architectural level (software design if at all).

Monitoring

Monitoring will be responsible for publishing:

- the alert stream/topic on any threshold crossed or alert-worthy event it’s been programmed and configured to raise;

- the throttled telemetry: the wire-worthy telemetry, i.e.: position telemetry filtered to significant movement. What counts as significant is client (UAV Avatar) configurable. Remek thinks this stream may be overkill: the Avatar Client has to translate ROS to gRPC and has to understand every datatype anyway. It’s the only consumer of the throttled stream (what about the Inventory Client?), so it can do the throttling.
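
Wherever the throttling ends up living, filtering position telemetry to significant movement could be as small as the sketch below. It assumes positions in a local metric frame and a configurable minimum distance; the class name and threshold are hypothetical.

```python
import math


class MovementThrottle:
    """Forwards a position only if it moved far enough from the last forwarded one."""

    def __init__(self, min_distance_m):
        self._min_distance_m = min_distance_m  # the client-configurable "significant"
        self._last_sent = None

    def should_forward(self, position):
        if (
            self._last_sent is None
            or math.dist(position, self._last_sent) >= self._min_distance_m
        ):
            self._last_sent = position
            return True
        return False
```

Distance is measured from the last forwarded sample rather than the previous one, so slow drift still gets reported once it adds up to the threshold.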

Stopgap

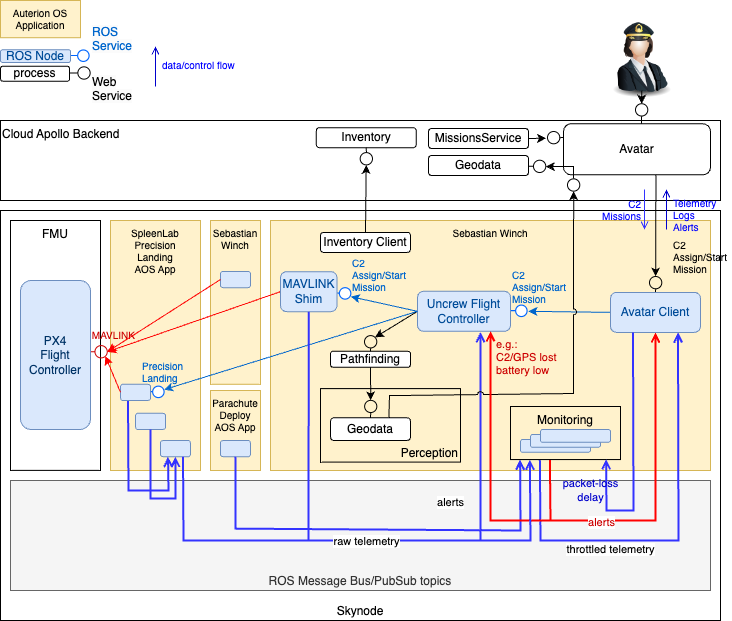

At the time of writing, the ability to talk ROS with PX4 is approx. 4 months away, so if we need to, we will have to implement the architecture proposed above in at least two steps. The following diagram proposes the first step:

MAVLINKShim needs to remain at the centre of this proposal because, until we can switch to ROS2, we have to keep talking MAVLINK. PX4 has difficulty handling multiple MAVLINK clients (everyone receives a copy of the telemetry and everyone can command the drone), so that is the force for:

- MAVLINKShim being the only Uncrew client of PX4

- MAVLINKShim posting telemetry to a ROS2 topic - and so immediate adoption of ROS2.
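
The two points above can be sketched as follows. `Topic` is an in-process stand-in for a ROS2 topic (it is not the rclpy API), and `MavlinkShimSketch` with its method names is hypothetical; the real shim would hold the actual MAVLINK connection.

```python
class Topic:
    """In-process stand-in for a ROS2 topic (not the rclpy API)."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self, callback):
        self._subscribers.append(callback)

    def publish(self, message):
        for callback in self._subscribers:
            callback(message)


class MavlinkShimSketch:
    """Hypothetical shim: the only component that holds the PX4 link."""

    def __init__(self, send_to_px4, telemetry_topic):
        self._send_to_px4 = send_to_px4  # callable wrapping the single PX4 link
        self._telemetry_topic = telemetry_topic

    def on_px4_telemetry(self, message):
        # Fan telemetry out to every on-board consumer via the topic.
        self._telemetry_topic.publish(message)

    def command(self, command):
        # All commands reach PX4 through this one path.
        self._send_to_px4(command)
```

Consumers subscribe to the topic instead of opening their own MAVLINK connection, so a later switch to native ROS2 telemetry would not change them.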