Apollo Flightlog Pubsub.howto

Originally 0089-Apollo-FlightLog_PUBSUB.HowTo (v15)

Flight Log on PubSub

Context

Google PubSub resources each have a specific meaning, role and set of limitations, so it is important to propose and scrutinise how Uncrew will use them when propagating the Flight Log to its consumers.

Google PubSub is a scalable, asynchronous messaging service that decouples consumers from producers. It defines the following entities:

Topic - producers publish messages to named topics and consumers subscribe to these topics. A topic may be considered part of the infrastructure or may be dynamically created by the application as needed. PubSub will retire a topic after 31 days of inactivity. Clients need to present the pubsub.topics.create Google IAM permission in order to create a topic, and the pubsub.topics.publish permission in order to publish into a topic.

- Retention - by default each message published to a topic is purged right after it is consumed by all Subscriptions attached to the topic. The topic, however, allows extending the retention of messages to a specific duration. Doing so opens the opportunity to replay previously consumed messages, either from some past timestamp or from a previously created Snapshot (akin to a bookmark).

Subscription - akin to a Kafka Consumer Group, a subscription receives each message on the topic at least once and load-balances the messages across all subscribers attached to it. A subscription can have a filter against message attributes (not payload), in which case it only sees messages that match the filter. Those that don’t match are implicitly deemed consumed/acknowledged. Using a filter as a sub-topic may become expensive: one does not pay egress charges for filtered messages, but does pay the subscription throughput charges. A client needs to present the pubsub.subscriptions.create permission in order to create a subscription.

Message - a message is an opaque block of bytes that publishers publish and subscribers receive and consume. A subscriber must acknowledge the consumption of each message. If it doesn’t, the message will be re-delivered to the subscription until it is acknowledged.

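    // Receive blocks until ctx is done; the callback runs for each message.
    err = sub.Receive(ctx, func(_ context.Context, msg *pubsub.Message) {
        fmt.Fprintf(w, "Got message: %q\n", string(msg.Data))
        atomic.AddInt32(&received, 1)
        // Acknowledge the message so that it is not re-delivered.
        msg.Ack()
    })

Target Volumes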

It is very important to calculate the operational costs when considering the use of PubSub. For that we need to estimate representative volumes.

A basic 6-degrees-of-freedom telemetry sample weighs roughly:

    types.v1.Position3D position = 1;    // 8+8+8+1 = 25
    types.v1.Pose pose = 2;              // 8+8+8   = 24
    google.protobuf.Timestamp time = 3;  // 8+4     = 12
                                         //           -----
                                         //           61 bytes

With a 1Hz telemetry update cadence this produces 61*3600 bytes, or about 0.21MB, per flight per hour. Let’s multiply it 10x for good measure (greater cadence and more telemetry types), yielding 2MB per flight per hour. 4630 Walmart stores with 100 drones each yield 2MB*100*4630≈926GiB per hour and (with 24/7 delivery) 926GiB*24*30=666720GiB per month.

At $40 per TiB, this yields 666.720*$40≈$26.7K per month in publish throughput charges.
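The estimate above can be reproduced with a few lines of Go. This is only a sketch of the arithmetic: the constant and function names are made up for illustration, and the price is assumed to be $40 per 1000 GB, treating GB, GiB and TiB as loosely as the estimate itself does.

```go
package main

import "fmt"

// Figures taken from the text. The price is assumed to be $40 per 1000 GB,
// treating GB, GiB and TiB as loosely as the estimate itself does.
const (
	sampleBytes     = 61   // one 6-DoF telemetry sample
	samplesPerHour  = 3600 // 1Hz cadence
	mbPerFlightHour = 2.0  // ~0.21MB x 10, rounded as in the text
	stores          = 4630
	dronesPerStore  = 100
	pricePer1000GB  = 40.0
)

// rawMBPerFlightHour is the unpadded hourly volume of a single flight.
func rawMBPerFlightHour() float64 {
	return float64(sampleBytes*samplesPerHour) / (1024 * 1024)
}

// fleetGBPerHour is the padded hourly volume of the whole fleet.
func fleetGBPerHour() float64 {
	return mbPerFlightHour * dronesPerStore * stores / 1000
}

// fleetGBPerMonth assumes 24/7 operation and a 30-day month.
func fleetGBPerMonth() float64 {
	return fleetGBPerHour() * 24 * 30
}

// monthlyPublishCost is the publish throughput charge in dollars.
func monthlyPublishCost() float64 {
	return fleetGBPerMonth() / 1000 * pricePer1000GB
}

func main() {
	fmt.Printf("single flight: %.2f MB/h\n", rawMBPerFlightHour())
	fmt.Printf("fleet: %.0f GB/h, %.0f GB/month\n", fleetGBPerHour(), fleetGBPerMonth())
	fmt.Printf("publish throughput: $%.0f/month\n", monthlyPublishCost())
}
```

Changing the cadence, the padding factor or the fleet size in the constants shows how sensitive the monthly charge is to each assumption.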

It is worth noting that we are currently not serializing the data as efficiently as we are able to before publishing, so all the values will be somewhat higher than indicated here. If this becomes an issue, we can implement a more efficient serialization method.

Decision

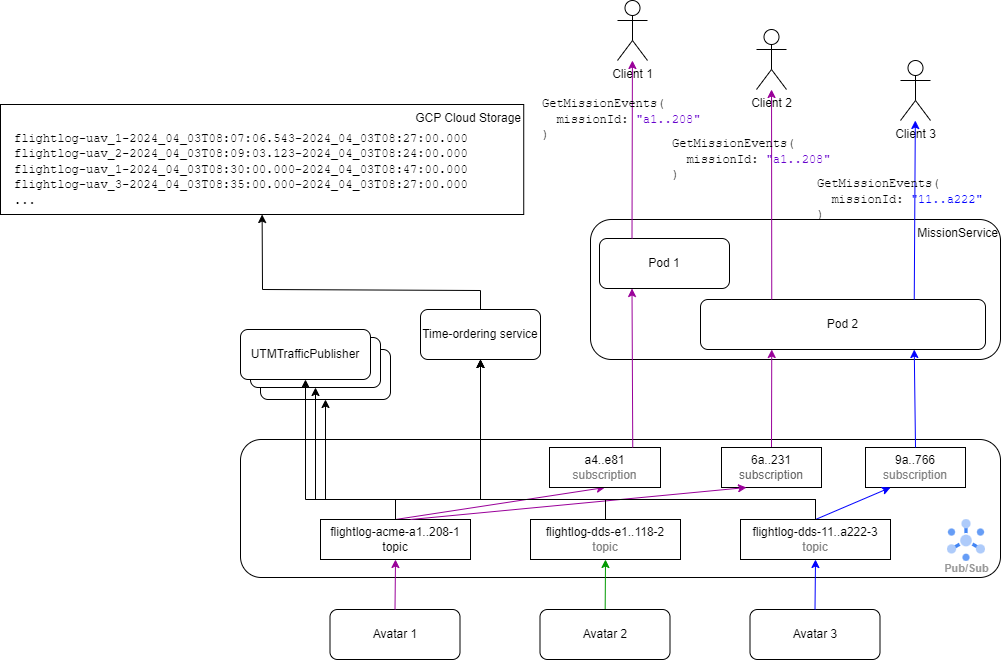

- On arm, each Avatar creates its FlightLog topic, if it does not exist yet, and starts writing to it. Since one GCP Project can only host one PubSub instance, that instance may hold topics unrelated to FlightLogs, so the FlightLog topic shall be namespaced with a prefix. UAV IDs are assumed to be globally unique, so FlightLog topic names shall have the form flightlog-<uav id>.

  - Since FlightLog topics are tied to the UAV ID, a given topic will be used for the lifetime of the vehicle and will not be deleted.

- Whenever a MissionsService pod receives a gRPC MissionRequestService/GetMissionEvents request for the FlightLog of a specific mission, it knows which UAV the mission is assigned to, and therefore which topic to subscribe to.

- On start, Dataflow and TrafficPublisher shall query PubSub for available topics and subscribe to the topics they see fit (e.g. any topic matching the form flightlog-<uav id>).
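The naming scheme can be captured in two small helpers, one for the Avatar and one for consumers that pick FlightLog topics out of a topic listing. This is a minimal sketch: the helper names and the sample topic IDs are hypothetical, and the actual listing call against PubSub is left out.

```go
package main

import (
	"fmt"
	"strings"
)

// topicPrefix namespaces FlightLog topics within the shared PubSub instance.
const topicPrefix = "flightlog-"

// TopicID returns the topic ID for the given UAV.
func TopicID(uavID string) string {
	return topicPrefix + uavID
}

// UAVFromTopicID returns the UAV ID encoded in a FlightLog topic ID. The
// second result is false for topics that do not follow the naming scheme.
func UAVFromTopicID(topicID string) (string, bool) {
	if !strings.HasPrefix(topicID, topicPrefix) || len(topicID) == len(topicPrefix) {
		return "", false
	}
	return strings.TrimPrefix(topicID, topicPrefix), true
}

func main() {
	// Hypothetical topic IDs, as a listing of the project's topics might return.
	topics := []string{TopicID("uav-0042"), "billing-events", "flightlog-"}
	for _, t := range topics {
		if uav, ok := UAVFromTopicID(t); ok {
			fmt.Printf("subscribe to %s (UAV %s)\n", t, uav)
		}
	}
}
```

Keeping both directions in one place means the prefix only has to change in a single constant if the namespace is ever renamed.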

Flight Log ID

When the UAV flies a mission, the flight id can be derived simply from the mission id. However, the UTMTrafficPublisher will have to decorate the outgoing telemetry samples with a flight id that is intelligible to the UTM system; at AirMap this was the AirMap-generated flight id. A mission that is committed to be flown has to be planned with a UTM system, so we may later decide to use that UTM flight id instead.

There could be occasions when the UAV flies without having a mission assigned. For some reason the pilot disarms the drone, tells it to take off and go somewhere. No matter how un-operational or illegal this is, Uncrew shouldn’t assume this won’t happen. When it does happen, we will simply set the flight id to nil.

Storing Historical Logs

The MissionsService has the obligation to serve the full Flight Log history for ongoing or past flights, so it needs storage. Google PubSub can be configured to retain acknowledged messages for up to 31 days at $0.27 per GiB-month. For the volumes estimated above this comes to 666720GiB*$0.27≈$180K per month in storage costs.

The MissionsService doesn’t need the Flight Log data indexed by anything other than the Mission Id and time. Google Cloud Storage is 10x cheaper than PubSub. Since it’s impossible to (continuously) append to a GCS blob, it would be desirable to have PubSub retain each Flight Log message until that message is written out to GCS. We can configure this GCS writing service to run at a given interval, so if that interval is 30 minutes then:

- Some messages appear in the storage with a 30-minute delay.

- One will have to configure the topic’s message retention to more than 30 minutes in order to do the following:

Whenever the MissionsService receives a request for a FlightLog, it doesn’t care whether the mission is ongoing or not. It creates a new PubSub subscription towards the UAV assigned to this mission, exclusively for this request (shared subscriptions would load-balance the messages). It picks the first message and:

- if it’s the arm command (or any other pre-agreed mission-start marker), it knows that the entire Flight Log is still stored in PubSub. It simply forwards all messages to the requestor. The requestor then receives the historical telemetry in quick succession and the remaining telemetry as it happens.

- if it’s not the arm command, the leading telemetry messages have already expired and the MissionsService must reach into Google Cloud Storage, fetch what is there that relates to the mission and reconcile it with what is still in the subscription.

  - a sub-use-case here is an old mission for which there are no messages in PubSub at all because they have all expired. To handle this, the MissionsService could set a timer on its subscription. If no messages arrive within 300ms, it can start consulting Google Cloud Storage right away.
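The three cases above boil down to one decision on the first message of a fresh subscription. The sketch below models the subscription as a Go channel to stay self-contained; the Event type, the Source values and the chooseSource function are hypothetical names, not part of the MissionsService.

```go
package main

import (
	"fmt"
	"time"
)

// Source says where the MissionsService has to read a Flight Log from.
type Source int

const (
	FromPubSub           Source = iota // the arm marker is still retained
	FromStorageAndPubSub               // the head expired; reconcile with GCS
	FromStorageOnly                    // nothing arrived before the timeout
)

// Event is a hypothetical, stripped-down Flight Log message.
type Event struct {
	IsArm bool // true for the arm command or another mission-start marker
}

// firstMessageTimeout is the 300ms grace period suggested in the text.
const firstMessageTimeout = 300 * time.Millisecond

// chooseSource inspects the first message delivered on a fresh subscription.
func chooseSource(first <-chan Event, timeout time.Duration) Source {
	select {
	case ev := <-first:
		if ev.IsArm {
			return FromPubSub
		}
		return FromStorageAndPubSub
	case <-time.After(timeout):
		return FromStorageOnly
	}
}

func main() {
	live := make(chan Event, 1)
	live <- Event{IsArm: true}
	fmt.Println(chooseSource(live, firstMessageTimeout) == FromPubSub)

	expired := make(chan Event) // no message ever arrives
	fmt.Println(chooseSource(expired, firstMessageTimeout) == FromStorageOnly)
}
```

A real implementation would also have to keep the first message for forwarding rather than discard it, which this sketch leaves out.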

Message Format

Apollo’s native format is Protobuf and so is the format of the APIs the MissionsService exposes to its clients. Apollo would therefore benefit if the messages written to PubSub and Google Cloud Storage were Protobuf, more or less like so:

    message FlightLogEvent {
      enum OriginSystem {
        UNKNOWN = 0;
        UAV = 1;
        PILOT = 2;
        BACKEND = 3;
      }

      google.protobuf.Timestamp time = 1;
      OriginSystem origin_system = 2;
      string origin_service = 3; // e.g.: "MissionsService", "px4"

      // The oneof name is illustrative. Its fields share the message's
      // field-number space, so they continue after field 3.
      oneof event {
        types.v1.Position6DoF position_6dof = 4;
        avatar.v1.ArmRequest arm_request = 5;
        avatar.v1.GoToRequest goto_request = 6;
        // ...
      }
    }

However, we’re also writing the Flight Log to Google Cloud Storage, where the primary audience is data science and analytics. Protobuf is not a format of data science and analytics. Avro, parquet, csv or json are. BigQuery can’t index a bucket written as Protobufs. Flight Logs on Google Cloud Storage must be consumable by both data science and the MissionsService. Off-the-shelf libraries exist that convert between json and virtually every other format, so we will compromise by storing the logs as json and putting the (relatively light) burden of translation on the consumers of the logs.
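To illustrate the translation burden on a consumer, here is a sketch of a JSON round trip using only Go's standard library. The Go structs are hypothetical stand-ins for a subset of the Protobuf message (the Position6DoF fields in particular are invented), and the real services might well use generated Protobuf types with a Protobuf-aware JSON encoder instead.

```go
package main

import (
	"encoding/json"
	"fmt"
	"time"
)

// Position6DoF is a hypothetical stand-in for types.v1.Position6DoF;
// the field set is invented for this example.
type Position6DoF struct {
	Latitude  float64 `json:"latitude"`
	Longitude float64 `json:"longitude"`
	Altitude  float64 `json:"altitude"`
}

// FlightLogEvent mirrors a subset of the Protobuf message for illustration.
type FlightLogEvent struct {
	Time          time.Time     `json:"time"`
	OriginSystem  string        `json:"origin_system"`
	OriginService string        `json:"origin_service"`
	Position6DoF  *Position6DoF `json:"position_6dof,omitempty"`
}

// encode serializes one event as a JSON document.
func encode(ev FlightLogEvent) ([]byte, error) {
	return json.Marshal(ev)
}

// decode parses a JSON document back into an event.
func decode(data []byte) (FlightLogEvent, error) {
	var ev FlightLogEvent
	err := json.Unmarshal(data, &ev)
	return ev, err
}

// sampleEvent returns a made-up telemetry sample.
func sampleEvent() FlightLogEvent {
	return FlightLogEvent{
		Time:          time.Date(2024, 1, 2, 3, 4, 5, 0, time.UTC),
		OriginSystem:  "UAV",
		OriginService: "px4",
		Position6DoF:  &Position6DoF{Latitude: 52.5, Longitude: 13.25, Altitude: 120},
	}
}

func main() {
	data, err := encode(sampleEvent())
	if err != nil {
		panic(err)
	}
	fmt.Println(string(data))

	ev, err := decode(data)
	if err != nil {
		panic(err)
	}
	fmt.Println(ev.OriginService, ev.Time.Format(time.RFC3339))
}
```

Unset optional payloads are omitted from the output, which keeps each JSON record limited to the one event type it carries.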

Message Ordering

The Avatar buffers and batches the telemetry messages it sends to PubSub to alleviate some of the throughput burden. As a result, the messages are not necessarily time-ordered. Consuming the logs chronologically is necessary to make any reasonable use of them, so we are creating an intermediary service between PubSub and the storage location which will order the messages before writing them to storage.
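The core of such an ordering step is a stable sort by event time over whatever batch the intermediary service has collected. The following is a minimal sketch with a hypothetical Event type; how the service windows its batches and handles messages that arrive after their window has been written is not covered here.

```go
package main

import (
	"fmt"
	"sort"
)

// Event is a hypothetical Flight Log message reduced to what ordering needs.
type Event struct {
	UnixNanos int64  // event time taken from the message's time field
	Payload   string // stands in for the rest of the message
}

// sortChronologically orders a batch by event time. The sort is stable, so
// events with equal timestamps keep their arrival order.
func sortChronologically(batch []Event) {
	sort.SliceStable(batch, func(i, j int) bool {
		return batch[i].UnixNanos < batch[j].UnixNanos
	})
}

func main() {
	batch := []Event{
		{UnixNanos: 3, Payload: "land"},
		{UnixNanos: 1, Payload: "arm"},
		{UnixNanos: 2, Payload: "goto"},
	}
	sortChronologically(batch)
	for _, ev := range batch {
		fmt.Println(ev.UnixNanos, ev.Payload)
	}
}
```

Sorting by the timestamp carried in the message, rather than by arrival time, is what makes the result independent of how the Avatar batched the messages.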

Consequences

Utilizing a topic per flight will have us hit the 10K subscription limit at around 3 thousand simultaneous flights. This assumes 3 subscriptions per active flight: MissionsService on behalf of HubOps, TrafficPublisher and Dataflow. Approaching this number will be good news, but it will have us reconsider our strategy.

Maybe we will learn how to use Dataflow better to partition from one all-flights topic. If we do, we will have the Avatar publish to two topics at once: all-flights topic and mission topic.

Maybe we will partition Uncrew to use multiple PubSub subscriptions or find a lever to negotiate the quotas with Google.

Maybe we shard flights into topics, so a topic will have a little more than one flight in it and the wastage will be controlled.

None of that gets around the 10K subscription limit, though, and I don’t see what we can do about it, other than reconsider PubSub entirely.

Alternatives Considered

Topics - Shared vs per-Mission

There are two kinds of consumers for the Flight Logs:

- MissionsService, interested in one specific Mission at a time;

- Dataflow or TelemetryPublisher, interested in all Flight Logs.

As stated above, 4630 Walmart stores with 100 drones each yield 666720GiB of data per month and 463000 simultaneous flights. This means that at any normal time there are 463000 PubSub subscriptions, as HubOps listen to the updates on each mission whose completion they are awaiting. If we chose to use a single all-flights topic, each subscription would pay for 666720GiB of data in subscription throughput charges despite only needing 1/463000th of it, wasting away (463000-1)*666.720*$40=$12347627731.2 ($12B) every month. This practically means that the MissionsService must not drain from a topic that carries all the Flight Logs.
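The size of that waste follows directly from the fleet figures. The sketch below redoes the calculation, with made-up constant and function names, under the same assumptions as the text: one subscription per simultaneous flight, 666.720 thousand GiB per month on the shared topic and $40 per thousand GiB of subscription throughput.

```go
package main

import "fmt"

const (
	subscriptions    = 4630 * 100 // one per simultaneous flight
	monthlyThousands = 666.720    // shared topic volume, in thousands of GiB
	pricePerThousand = 40.0       // dollars of subscription throughput
)

// wastedPerMonth returns the dollars spent on data that subscribers do not
// need when n subscriptions each drain the whole all-flights topic. Every
// subscription needs 1/n of the volume, so n-1 full volumes are wasted.
func wastedPerMonth(n int) float64 {
	return float64(n-1) * monthlyThousands * pricePerThousand
}

func main() {
	fmt.Printf("subscriptions: %d\n", subscriptions)
	fmt.Printf("wasted per month: $%.1f\n", wastedPerMonth(subscriptions))
}
```

The waste grows linearly with the number of subscriptions, which is why a shared topic only stays affordable for a handful of consumers.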

Meanwhile indiscriminate consumers such as Dataflow->Cloud Storage or TrafficPublisher won’t know what flights take place. Yes, they do care what flights take place as they have to partition by flight, but they won’t know what topics to subscribe to. Because they need to partition by flight and because PubSub round robins messages to all subscribers, the subscribers won’t be able to partition by flight if they drain one all-flights topic. This then suggests that indiscriminate consumers will also want to see per-flight topics.

Datastore instead of GCS

We could write the historical telemetry to a database (e.g.: Google Datastore) instead of GCS. We won’t need its complex indexing or data typing, but it will stop us from having to coordinate the Dataflow time partitioning with the PubSub retention, which is a worrying dependency. In fact, we won’t even need to configure any retention on the PubSub. However, we would still need to reconcile the historical data with the PubSub stream and doing all this would be ~5x more expensive than GCS. We also know that GCS copes really well with ever-growing data and we don’t know how Datastore compares as the amount of data reaches Big Data volumes.

Additionally, the pricing for Datastore is $0.06 per 100,000 entity reads and $0.18 per 100,000 entity writes.

Running conservatively with a 1Hz telemetry rate per flight, 100 drones and 4630 Walmart stores yield 100*4630*60*60=1666800000 entities and 0.21*100*4630=97GB per hour.

So in order to read the last hour of flights with Datastore, we need to write it first: 1666800000*0.18/100000=$3000.24

and then pay for each read: 1666800000*0.06/100000=$1000.08

In order to re-read the last hour of logs (97GB) from PubSub we need to pay 0.1*40=$4.
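The comparison can be checked with a short calculation. This is a sketch under the figures quoted in this section; the names are made up, and the PubSub figure comes out slightly below $4 because the text rounds 97GB up to 0.1 thousand GB.

```go
package main

import "fmt"

const (
	entitiesPerHour   = 100 * 4630 * 60 * 60 // drones x stores x samples per hour
	writePricePer100k = 0.18                 // dollars per 100,000 entity writes
	readPricePer100k  = 0.06                 // dollars per 100,000 entity reads
	mbPerFlightHour   = 0.21                 // unpadded 1Hz telemetry
	flights           = 100 * 4630
	pubsubPricePerTB  = 40.0 // dollars, treating 1TB as 1,000,000MB
)

// datastoreWriteCost is the cost of writing one hour of telemetry entities.
func datastoreWriteCost() float64 {
	return float64(entitiesPerHour) / 100000 * writePricePer100k
}

// datastoreReadCost is the cost of reading that hour back once.
func datastoreReadCost() float64 {
	return float64(entitiesPerHour) / 100000 * readPricePer100k
}

// pubsubReplayCost is the cost of re-reading the same hour from PubSub.
func pubsubReplayCost() float64 {
	return mbPerFlightHour * flights / 1e6 * pubsubPricePerTB
}

func main() {
	fmt.Printf("Datastore write: $%.2f\n", datastoreWriteCost())
	fmt.Printf("Datastore read:  $%.2f\n", datastoreReadCost())
	fmt.Printf("PubSub replay:   $%.2f\n", pubsubReplayCost())
}
```

The gap of several hundred times comes from Datastore charging per entity while PubSub charges per byte, and a telemetry sample is a very small entity.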