Pathfinder VOXEL SCENE Delegation

Originally

ADR--0032-PATHFINDER-VOXEL-SCENE-DELEGATION (v7) · Source on Confluence ↗Delegating Pathfinder Scene Rendering Upstream

Context

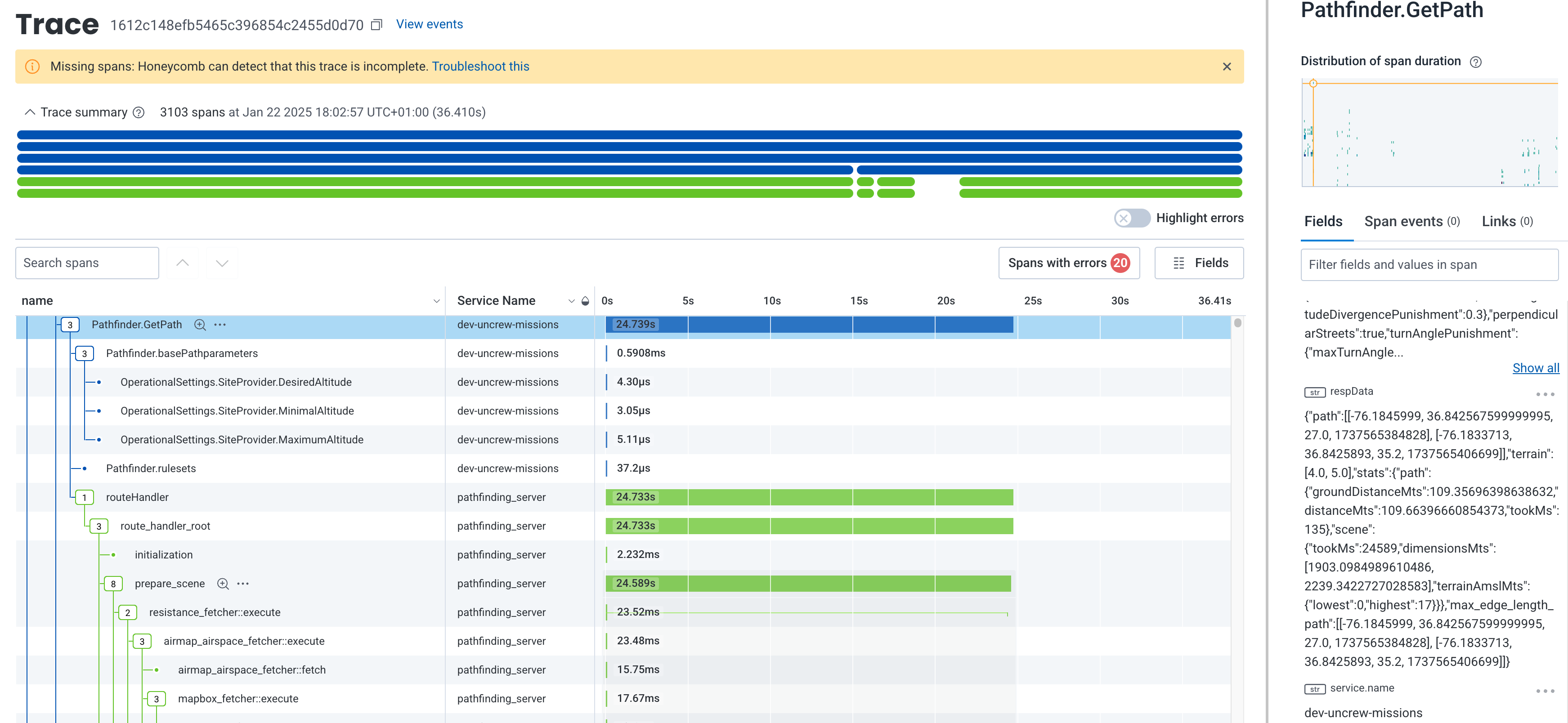

The problem we’re seeing is the long time it takes for the uncrew-pathfinder to find paths in complex scenes. In many cases, virtually all this time is spent preparing the scene (volatile source trace here):

Of which again, most of the time is taken by the process of rendering the many airspaces in scope of the scene into the 3D voxel occupancy array.

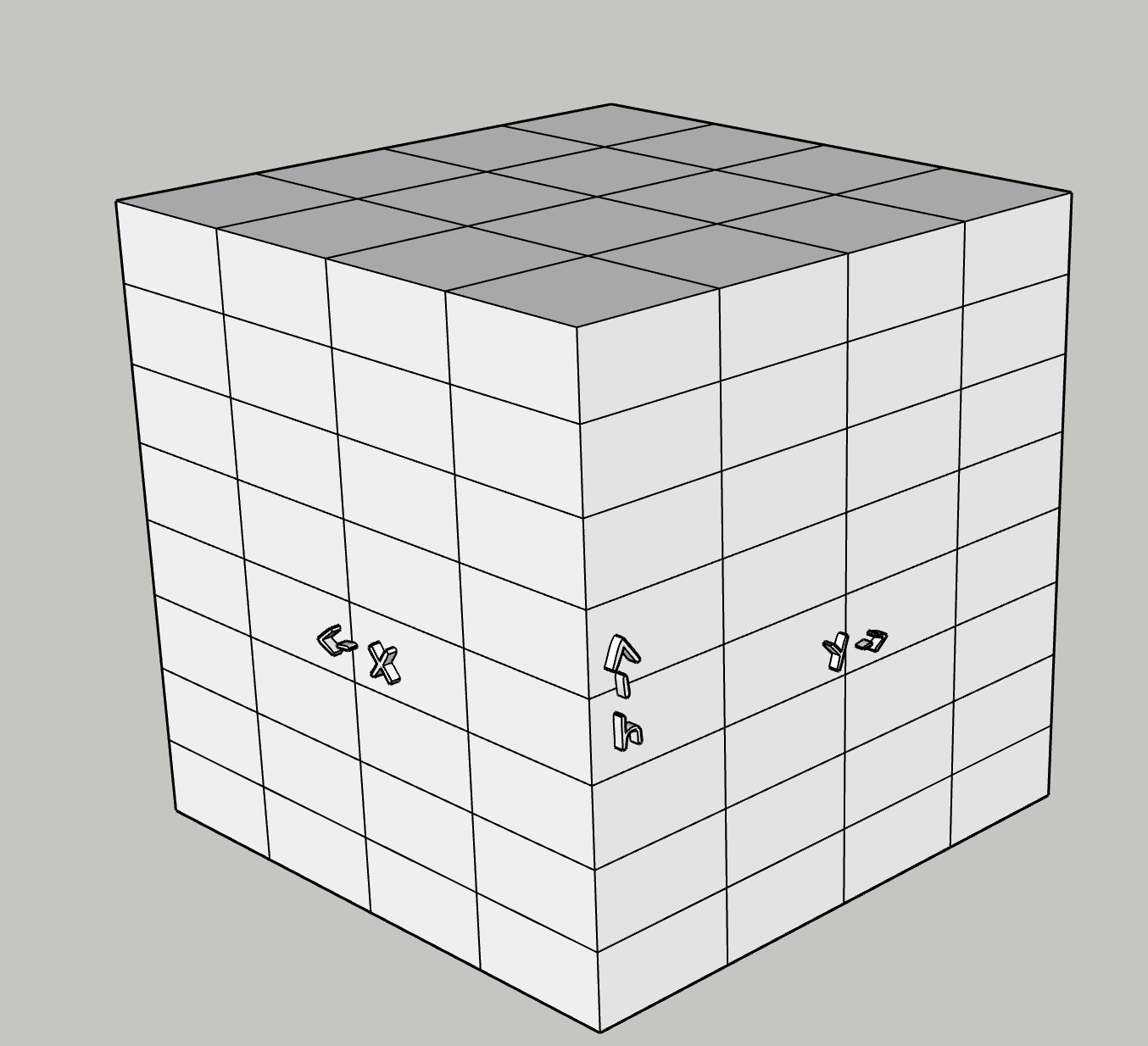

The rendering algorithm is expensive owing to how expensive it is to test (airspace) polygon intersection in a spherical coordinate system. It goes as follows:

For each airspace:

- Check that the bounding box wrapped around the airspace intersects with the scene (bounding box intersection is very cheap to calculate), if not, terminate, if yes:

- If bounding box’s area is smaller than “min parting area”, divide it in four and repeat (a) for each resulting bounding box; if bounding box is smaller than the “min parting area”:

- For each cell (2d version of voxel) within the bounding box, pad the cell by half and test if it intersects with the airspace, if it does

- Iterate the vertical column of voxels whose base matches the cell and is suspended between the airspace’s floor and ceiling and light each up. The airspace ceiling and floor may have mean-sea-level or above ground vertical reference and the latter case requires that the rendering algorithm has the digital terrain model handy. This means the pathfinder has to download terrain before it can start processing airspaces.

The journey of the scene data is today as follows:

.png)

The obvious temptation is to move the airspace rendering not when it’s needed in the pathfinder, but when it’s available in the uncrew-geodata-service. Naively; every time the geodata-service receives an updated tile, it could run the same airspace rendering algorithm the pathfinder does today and produce a 3D raster tile. It could then put that raster tile on the wire whenever pathfinder asks, and pathfinder could page it directly to its memory thus populating the scene.

This naive approach however has one important caveat. Airspaces, especially operational-intents, are time bounded, i.e.: airspaces are “active” only between some specific timestamps. The pathfinder deals with this today by only considering (rendering) airspaces that are active between “now” and some fixed max flight duration. The geodata-service cannot do that as its “now” (reception of an updated tile) is different than pathfinder’s “now” (reception of the “find path” request). The operational-intents can be chopped by time relatively finely so the time dimension has quite a bit of change in it. Either we fall back from 3D raster to 4D raster or the tile rendering process and resulting tile format is non-trivial.

Airspaces are always hard obstacles and so a single boolean value suffices to describe the cost of crossing it (infinite or zero). Assuming current voxel sizes (15m x 15m x 8m) a pathfinding scene spanning 1km x 1km x 64m consists of 35K voxels and thus needs 4kB of memory (uncompressed) to store such raster. Assuming 10min temporal resolution, an hour-worth of scene data for the same area will require 20kB of memory.

Spherical Geospatial Calculations in Golang

Golang’s answer to geospatial computing spatial-go/geoos doesn’t appear to do spherical coordinate system calculations (not beyond SphericalDistance). Why is support for spherical system important? This is the (shortest) line that connects Paris and Vancouver. It intersects Greenland only in the spherical coordinate system. In Cartesian, it will pass New England.

Rendering airspaces is nothing more than checking whether voxels intersect with airspace polygons. Doing this with spherical coordinate system maths is a lot more expensive than with Cartesian. Below timed 100K iterations, a moderately complex polygon and a voxel-like box:

#16 42.52 29: intersect() between two polygons in spherical coordiantes 621ms

#16 42.52 29: intersect() between two polygons in cartesian coordiantes 51ms

#16 42.52 29: intersect() between polygon and bounding box in cartesian coordiantes 6msThe effects of Earth’s curvature are greatly diminished at close distances and intersection results differ little between Cartesian and spherical coordinate systems. But they do differ. If there was a geofence for instance that coarsely wraps the continental US, a geofence with edges miles and miles long, then, using Cartesian intersections, might produce paths that wrongfully step out of the geofence. This error (dotted red line d on the map above) depends on the latitude and segment’s (or airspace edge) length:

Hyderabad over distance 0.1km

distance between cartesian centre and intersection 6.40864e-05m

Virginia Beach over distance 0.1km

distance between cartesian centre and intersection 0.000144344m

Maine over distance 0.1km

distance between cartesian centre and intersection 0.000185938m

Anchorage over distance 0.1km

distance between cartesian centre and intersection 0.000357498m

Hyderabad over distance 1km

distance between cartesian centre and intersection 0.00613376m

Virginia Beach over distance 1km

distance between cartesian centre and intersection 0.0146681m

Maine over distance 1km

distance between cartesian centre and intersection 0.0192485m

Anchorage over distance 1km

distance between cartesian centre and intersection 0.0358013m

Hyderabad over distance 10km

distance between cartesian centre and intersection 0.613397m

Virginia Beach over distance 10km

distance between cartesian centre and intersection 1.46684m

Maine over distance 10km

distance between cartesian centre and intersection 1.92485m

Anchorage over distance 10km

distance between cartesian centre and intersection 3.58009m

Hyderabad over distance 100km

distance between cartesian centre and intersection 61.3385m

Virginia Beach over distance 100km

distance between cartesian centre and intersection 146.672m

Maine over distance 100km

distance between cartesian centre and intersection 192.459m

Anchorage over distance 100km

distance between cartesian centre and intersection 357.843m

Hyderabad over distance 1000km

distance between cartesian centre and intersection 6122.58m

Virginia Beach over distance 1000km

distance between cartesian centre and intersection 14549.1m

Maine over distance 1000km

distance between cartesian centre and intersection 18987.6m

Anchorage over distance 1000km

distance between cartesian centre and intersection 34227.4m

Hyderabad over distance 10000km

distance between cartesian centre and intersection 543586m

Virginia Beach over distance 10000km

distance between cartesian centre and intersection 990554m

Maine over distance 10000km

distance between cartesian centre and intersection 1.07762e+06m

Anchorage over distance 10000km

distance between cartesian centre and intersection 1.00574e+06mThis means that choosing a tile zoom whose east-west extent doesn’t cross 10kms our errors will never be greater than 3.5m (in Anchorage) or 1.9m in Maine or 1.4m in Virginia Beach.

Decision

4D Tiles Rendering

Upon reception of an airspace tile, the geodata-service shall generate the corresponding 4D tile raster data using the 4D resolution it is configured to maintain. This resolution could be expressed as [0.5’’, 0.05’’, 8m, 10min] and be the geodata-service deploy-time configuration. Additionally, the geodata-service can be configured to maintain a number of different resolutions at the same time to allow pathfinder use varied sized voxels. It seems wise to propose that, like with mapbox tiles, the resolution is a function of the zoom. I.e.: each tile, irrespective of the zoom, has the same pixel resolution.

The geodata-service shall maintain (cache) the 4D data applicable to some configured duration into the future.

As we are at it, we might consider asking the geodata-service to also produce, maintain and serve binary terrain tiles.

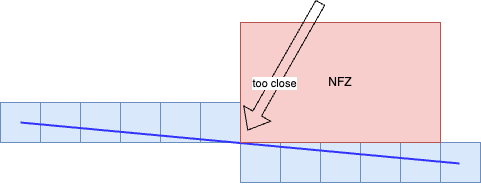

Padding

Geodata-service shall pad airspaces it renders with a configurable spatial and temporal padding independent of the voxel size. The padding however must be at least half of the voxel size.

4D Tile Schema

The pathfinder voxels map linearly to latitude and longitude, i.e.: the coordinate system they form is a trivial projection of wgs84. The rendering process needs to identify/paint the voxels each airspace intersects with. Just like DEM/DSM and unlike OSM/mapbox tiles, the 4D tiles shall use WorldCRS84Quad tile schema. The geodata-service shall serve the 4D tiles using 4d-raster/{z}/{x}/{y}/{h}/{t}where:

- z, x and y directly correspond to the zoom, x and y of WorldCRS84Quad.

- h is the heigh above sea level in some preconfigured increments (e.g.: 0 will hold data between 0 and 64 metres MSL, while 1 will hold data between 64 and 128m).

- t is the time in some preconfigured increments (e.g.: number of hours since the epoch) - and so if the pathfinder needs to fetch data for a flight that starts at 1:50 and its estimations indicate that it will end 2:10, it has to download two tiles for the same spatial region, one for the 1:00hour and one for the 2:00hour.

4D File Format

The data must be stored in a binary form and since:

- Pathfinder already depends on protobuf for mapbox vector tiles and open telemetry,

- Geodata-service already depends on protobuf as it speaks GRPC with UTM

It seems like protobuf is a good idea as the serialization method.

//we will iterate on this version when we refine this format possibly with Quad/Octa Trees

package rendered.v1;

message 4DTileResolution {

//longitudal

int32 x = 1;

//latitudal

int32 y = 2;

//vertical

int32 h = 3;

//temporal

int32 t = 4;

}

message 4DTile {

4DTileResolution resolution = 1;

//The 4D data is flattened into 1D array in this order: vertical/latitudal/longitudal/temporal

//i.e.: the first 8 bits in the range describe the voxel that's lowest altitude as well as

//south/western -most. The 2nd 8 bits describe the voxel next to the east.

//That way the caller may interpret the temporal dimension with time ranages it cares for.

bytes airspaces = 2;

}

Pathfinder Integration

Since the 4D tiles’ vertical coordinates start at sea level, the pathfinder will have to download terrain before it can work out what vertical slices of airspace data it needs to request. This may be avoided by allowing the pathfinder use AGL when requesting 4DTiles, but that complicates the exchange so we will wait and see if that optimization is necessary.

Pathfinder can populate the entire scene all at once as it does today leading to longer waiting times for terrain. However, populating the whole scene in advance is wasteful and pathfinder should instead load it lazily in quadrants as it iterates through path candidates. The quadrants should be aligned with the WorldCRS84Quad tiling.

Geodata-service Integration

We remarked that golang misses a geospatial library that supports spherical coordinate system calculations, but we also noted that Cartesian is way quicker and it looks like we can afford the resulting imprecision. Still, the pathfinder shouldn’t lose the ability to render airspaces, this function is ideally written once and it can be easily wrapped into a library that the golang service can cgo load and call create_tileocassionally without much more interaction.

Consequences

The obvious gain is that the scene initialization should fall drastically and be solely the function of the scene size, not the amount of airspaces in it.

The obvious cons is the tighter coupling between the pathfinder and the geodata-service, specifically:

- The pathfinder will only be at liberty to use voxel sizes that have been preconfigured at the geodata-service or forced to scale the incoming data which will cost CPU cycles;

- The pathfinder gets more complex by supporting varying airspace formats, or we drop the support for mapbox tiles leading to a massive rework of the integration tests;

Alternatives Considered

List all alternatives you’ve considered and explain briefly why did you reject it.

Standard 3D Tile Specification

Creating ones own file formats isn’t wise and it would be desirable to find something off-the-shelf. glTF is the first OGC standard specification for 3D tiles that Google produces. It carries pixels, vector geometries, textures, animations - everything a frontend needs to render the contents in front of a human. It doesn’t have the temporal dimension. glTF is deemed too complex for us, especially contrasted with the triviality of our counter-proposal 4D tiles.

Carry Terrain Data within 3DTiles

Instead of making two roundtrips: (1) terrain (2) airspace, the geodata-service could put the terrain data into the tiles. However, the 4DTiles stack vertically so the terrain data for stacking 4Dtiles will be redundant.

Use Parallel Computing and Cartesian Calculations

In recent developments we discovered that we can decimate the scene generation time by:

- parallelizing it (70%-90% improvement seen)

- using Cartesian maths (~90% improvement seen)

However, the former doesn’t scale to drone hardware. Meanwhile the idea to delegate the scene generation upstream has been floating for a long time now and not just because of performance. We wanted to remove cognitive load from the pathfinder and have it do just pathfinding without scaling, interpreting airspace properties, amsl vs agl airspace ceiling references and subtractive vs additive nature of some airspaces. For instance, LAANC/UASFM grid needs to be subtracted from the class airspace it lives in for the pathfinder to start navigating in this newly opened cavity.