Server Side Telemetry Processing Strategy

Originally

ADR-0129-Server-Side-Telemetry-Processing-Strategy (v9)

Server-Side Unit Conversion & Calculations

Decision Summary

We will move altitude graph telemetry preparation to server-side presentation endpoints and keep all mutation APIs strict SI.

This update adds mandatory plan-version segmentation, snapshot ordering, self-describing per-series unit metadata, and a normative downsampling strategy.

Context

The current frontend altitude graph path performs plan normalization, terrain alignment, drone history projection, and route merge locally.

Primary current paths:

- src/queryProviders/useFlyGraphData/index.ts

- src/queryProviders/useFlyGraphData/hooks/useFullGraphData.ts

- src/queryProviders/useFlyGraphData/utils/getFullRouteData.ts

Unit conversion is currently frontend-owned via local preference:

- src/config/units.ts

- src/utils/altitudeConverter.ts

- src/utils/distanceConverter.ts

Performance Baseline (Current State)

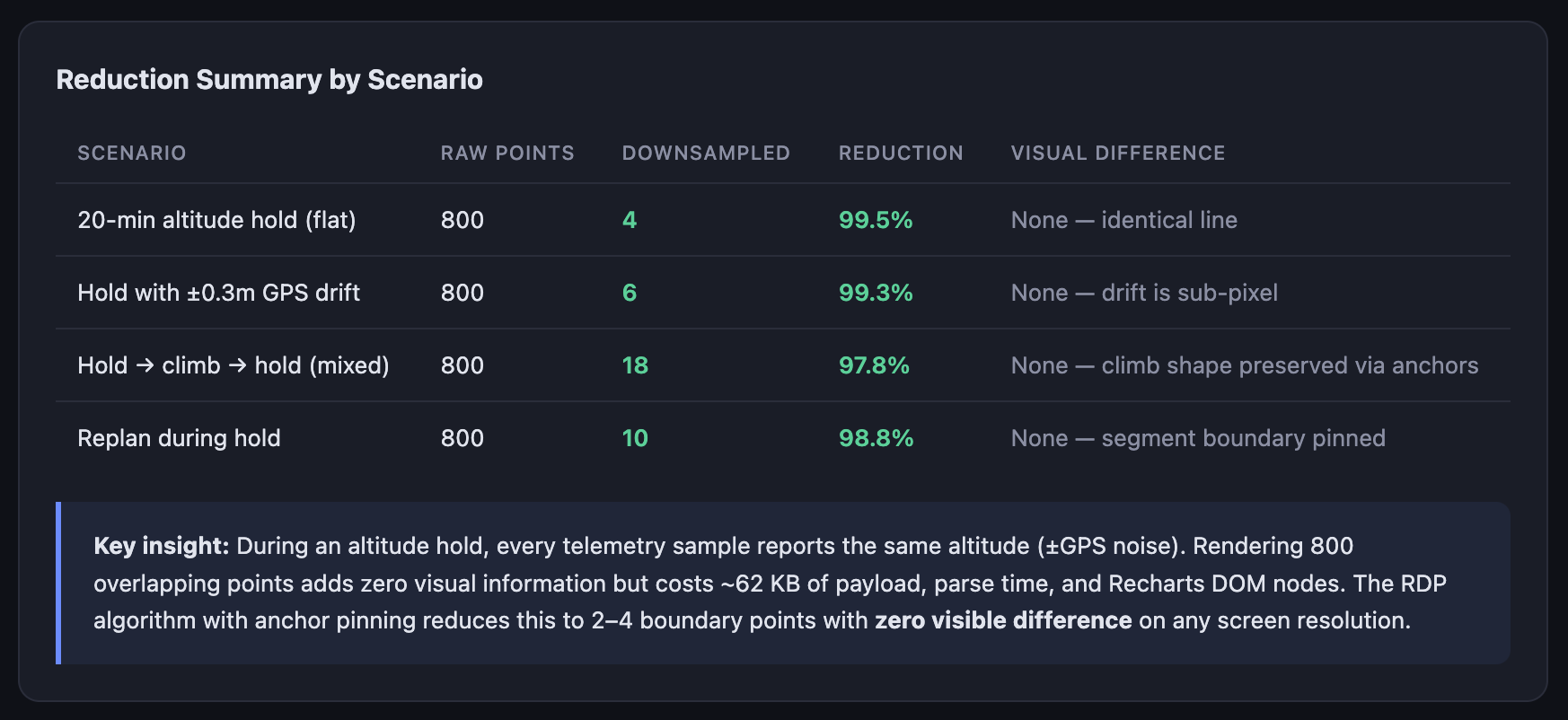

| Metric | Current Value | Target | Rationale |
|---|---|---|---|
| Points per typical 20-min mission | ~3,200 | <960 (70%+ reduction) | TBD |
| Uncompressed FE payload (full graph) | ~450 KB | <180 KB | TBD |
| FE merge/transform time (p95) | ~120 ms | <10 ms (thin adapter only) | TBD |

Observed constraints:

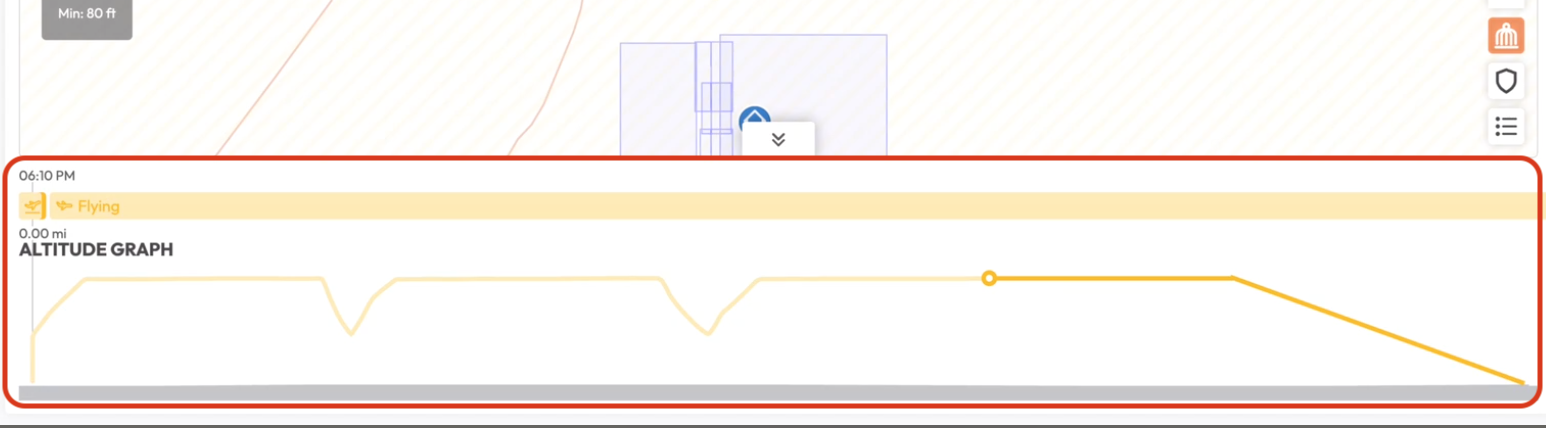

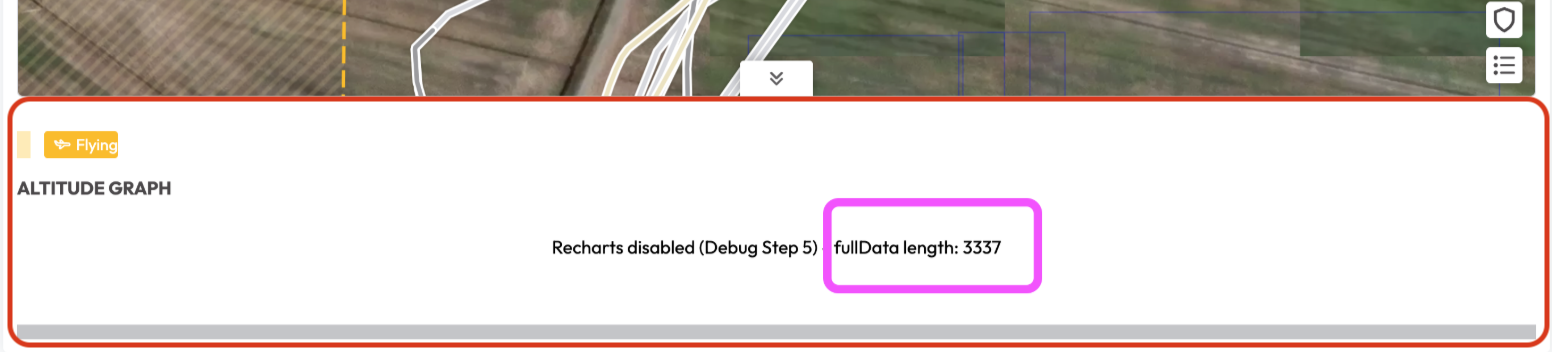

- Performance pressure from 3000+ telemetry points and frequent updates.

- Replanning during flight introduces ambiguity when FE matches by waypoint index only.

- Cross-client consistency risk when formulas and rounding are repeated on each platform.

- Flight-safety requirement that write paths remain unambiguous and canonical SI.

- The current altitude graph has no interactive zoom/brush flow; it renders the full extent directly in Recharts.

Decision Drivers (Ranked)

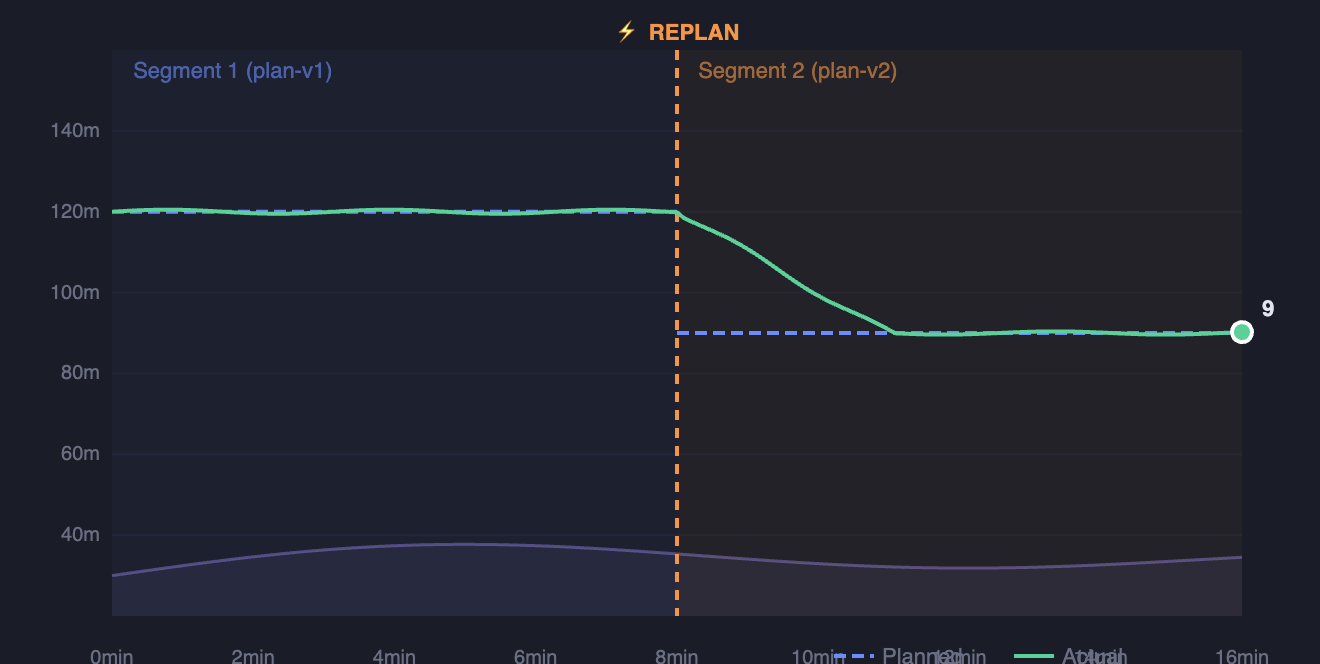

- Replan safety (blocking): Replanning during flight causes index-remap errors that can mislead operators. This is the primary driver; without plan-version segmentation, the graph is unreliable during the most critical phase of flight.

- Cross-client consistency (high): Across up to three platforms (if mobile or desktop clients are needed), duplicating conversion formulas leads to divergent rounding and unit bugs. Centralizing on the server eliminates this class of defect.

- Performance (high): 3,200+ raw points per mission cause unnecessary FE computation and network overhead. Downsampling is required for acceptable render performance.

- SI write-path integrity (non-negotiable): Mutation APIs must never accept ambiguous units. This is a safety invariant, not an optimization.

- Safe rollout (required): Any change to the telemetry display path must be incrementally rollable with instant revert.

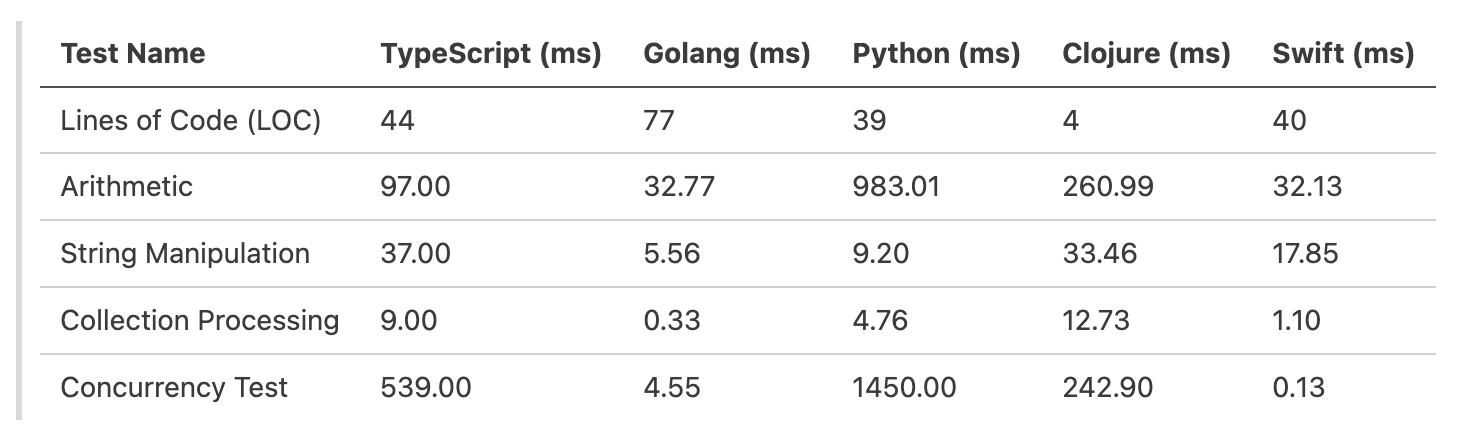

Local algorithm benchmarks indicate that Go outperforms TypeScript significantly in arithmetic and collection processing.

Specifically, for collection processing, Go is roughly 27x faster (0.33 ms vs 9.00 ms). This suggests that heavy data manipulation belongs on the server.

However, moving all unit awareness to the server introduces risks regarding data integrity on write operations and tight coupling of UI concerns to domain APIs.

Problems To Solve

- Drastically reduce point count while preserving graph shape and critical events.

- Represent dynamic plan changes without index remap errors.

- Standardize unit conversion and rounding rules across clients.

- Preserve strict SI semantics for writes/commands.

- Provide safe rollout and deterministic fallback.

Data Flow: Current vs. Proposed

CURRENT (client-heavy):

┌────────┐ raw telemetry ┌──────────────────────────────────────┐ render

│ gRPC │ ──────────────────► │ FE: merge, align, convert, project │ ──────────► Recharts

│ Server │ (~3200 pts, SI) │ (useFlyGraphData pipeline) │ (~3200 pts)

└────────┘ └──────────────────────────────────────┘

PROPOSED (server-heavy):

┌──────────────────────────────────────────┐ graph-ready ┌──────────────┐ render

│ Server: segment, downsample, convert, │ ──────────────► │ FE: adapter │ ──────────► Recharts

│ annotate (presentation endpoint) │ (<960 pts) │ + render │ (<960 pts)

└──────────────────────────────────────────┘ └──────────────┘

Decision

1) Read Path Becomes Server-Heavy

Introduce presentation/graph telemetry endpoints that return graph-ready, downsampled, optionally unit-converted series.

The frontend will focus on:

- request orchestration

- rendering

- loading/error states

- minimal adapter mapping

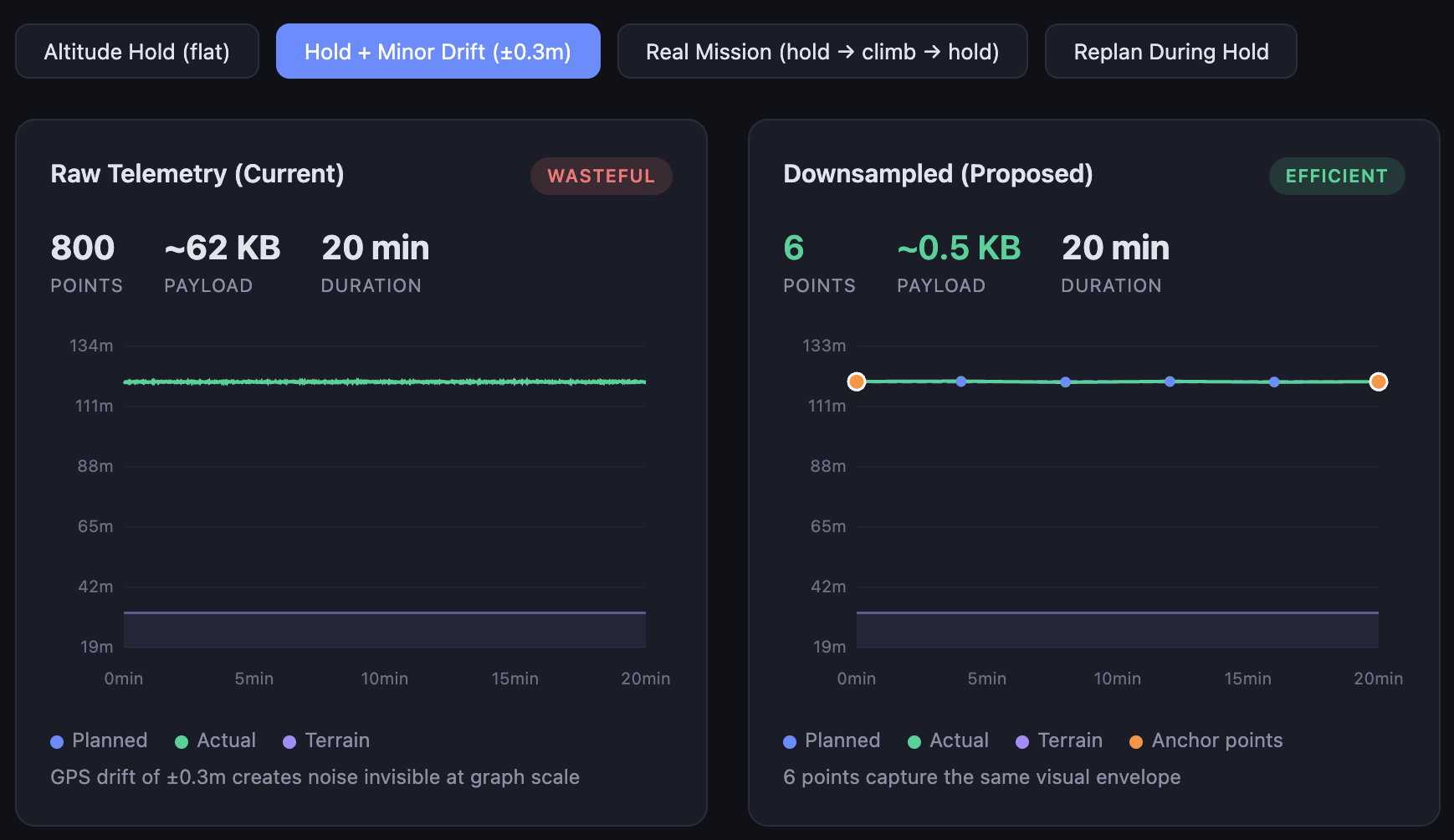

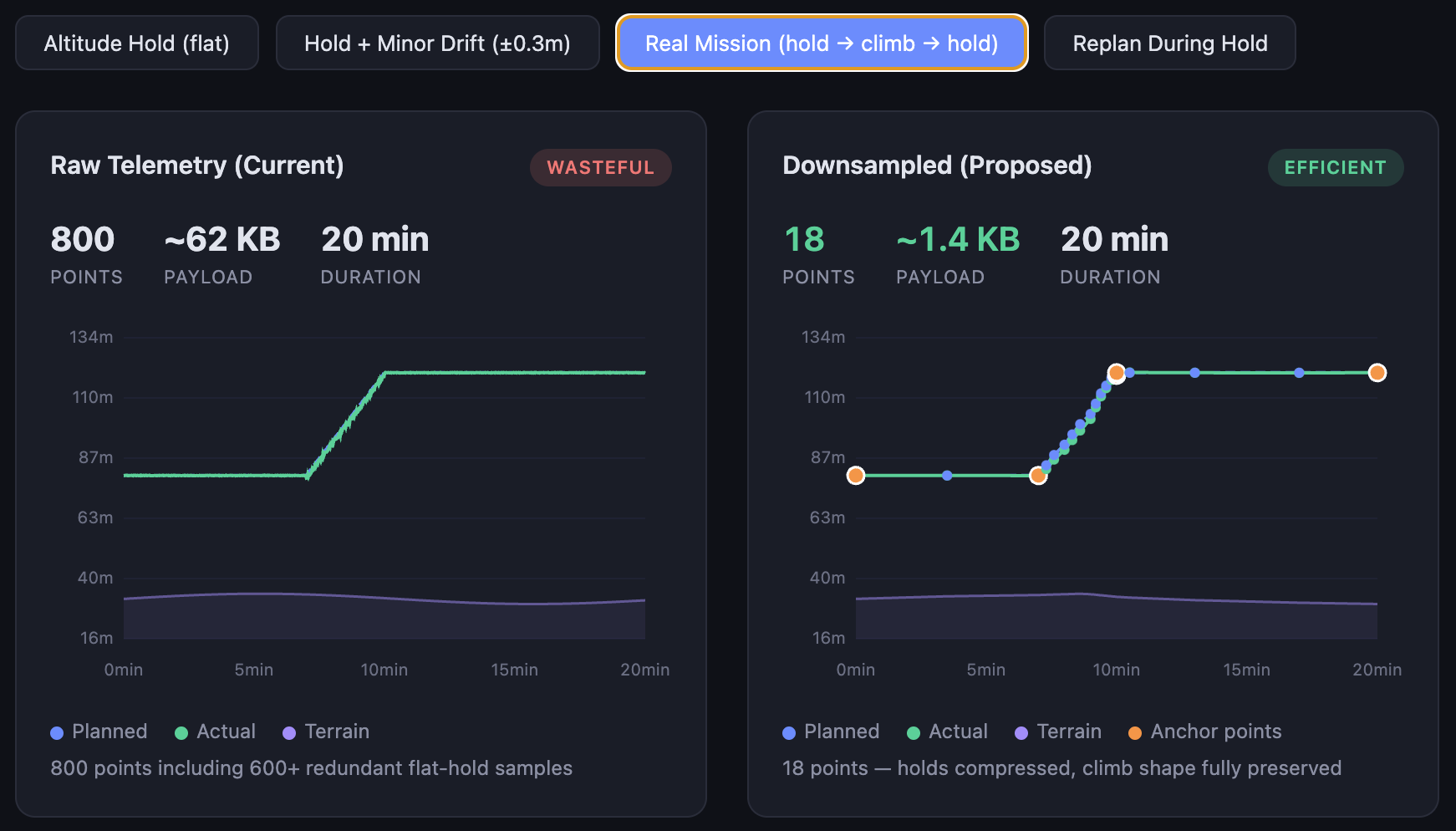

1a) Primary Optimization: Downsampling Is Mandatory

For altitude graph series, server-side downsampling is required before response.

Chosen base algorithm:

- Ramer-Douglas-Peucker (RDP) in screen-space approximation (pixel-aware), not raw meter-space.

Mandatory anchors that must always be kept:

- segment start/end

- plan_change_at points

- mission-state transition points (warning/contingency enter/exit)

- first and last actual telemetry point in each segment

- local extrema (peak/valley) candidates for the actual and planned series

Pipeline:

- split by plan_version_id

- inject anchors

- run RDP between anchors only

- enforce max_points cap as final guard
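The "inject anchors, then run RDP between anchors only" steps can be sketched as follows. This is a minimal illustration, not the server implementation: the `PxPoint` shape, the function names, and the way the two pixel tolerances are combined (each axis is rescaled by its epsilon so a single threshold of 1 applies) are all assumptions. The final max_points cap is left out because the ADR does not say which points to drop when the cap is hit.

```typescript
// Hypothetical point shape: coordinates are already projected to screen pixels.
interface PxPoint {
  x: number;        // horizontal position in px
  y: number;        // vertical position in px
  anchor?: boolean; // true for mandatory anchors (segment ends, plan changes, ...)
}

// Perpendicular distance from p to the line through a and b.
function distanceToLine(p: PxPoint, a: PxPoint, b: PxPoint): number {
  const dx = b.x - a.x;
  const dy = b.y - a.y;
  const len = Math.sqrt(dx * dx + dy * dy);
  if (len === 0) {
    return Math.sqrt((p.x - a.x) * (p.x - a.x) + (p.y - a.y) * (p.y - a.y));
  }
  return Math.abs(dx * (p.y - a.y) - dy * (p.x - a.x)) / len;
}

// Classic recursive RDP over pts[first..last]; marks survivors in keep[].
function rdpMark(pts: PxPoint[], first: number, last: number, epsilon: number, keep: boolean[]): void {
  let maxDist = 0;
  let maxIndex = -1;
  for (let i = first + 1; i < last; i++) {
    const d = distanceToLine(pts[i], pts[first], pts[last]);
    if (d > maxDist) {
      maxDist = d;
      maxIndex = i;
    }
  }
  if (maxIndex !== -1 && maxDist > epsilon) {
    keep[maxIndex] = true;
    rdpMark(pts, first, maxIndex, epsilon, keep);
    rdpMark(pts, maxIndex, last, epsilon, keep);
  }
}

// Downsamples one plan-version segment. Assumption: the two tolerances are
// applied by rescaling each axis so that a single threshold of 1 can be used.
function downsampleSegment(pts: PxPoint[], epsilonXPx: number, epsilonYPx: number): PxPoint[] {
  if (pts.length <= 2) return pts.slice();
  const scaled: PxPoint[] = pts.map((p) => ({ x: p.x / epsilonXPx, y: p.y / epsilonYPx }));
  // First/last points and flagged anchors are always kept.
  const keep: boolean[] = pts.map((p, i) => i === 0 || i === pts.length - 1 || p.anchor === true);
  const anchors: number[] = [];
  keep.forEach((k, i) => { if (k) anchors.push(i); });
  // Run RDP between consecutive anchors only, so anchors are never dropped.
  for (let k = 0; k + 1 < anchors.length; k++) {
    rdpMark(scaled, anchors[k], anchors[k + 1], 1, keep);
  }
  return pts.filter((_, i) => keep[i]);
}
```

In this sketch a flat run collapses to its boundary points plus any anchors inside it, while a sharp spike survives together with its immediate neighbours.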

Default parameters (v1):

- epsilon_y_px = 0.75 (vertical tolerance: sub-pixel on 2× DPR displays, ensuring no visible shape loss at standard retina resolution)

- epsilon_x_px = 0.5 (horizontal tolerance: tighter than vertical because horizontal misalignment is more perceptible in time-series graphs)

- max_points = min(1200, max(300, 2 * viewport_width_px)) (scales with screen width; the 2× factor provides comfortable headroom above 1 point per pixel)

These values were derived from visual regression testing on representative 20-minute missions at 1920×1080 and 2560×1440 resolutions. They should be re-validated during Phase 2 parity testing and may be tuned per device_pixel_ratio.
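As a worked illustration of the max_points default, the sketch below evaluates the formula and combines it with a client-supplied max_points. The function names are hypothetical, and treating the formula result as the server-side cap is an assumption rather than something the ADR states.

```typescript
// Default cap from the v1 parameters: min(1200, max(300, 2 * viewport_width_px)).
function defaultMaxPoints(viewportWidthPx: number): number {
  return Math.min(1200, Math.max(300, 2 * viewportWidthPx));
}

// Assumption: a client-requested max_points is honoured only up to the default cap.
function effectiveMaxPoints(viewportWidthPx: number, requested?: number): number {
  const cap = defaultMaxPoints(viewportWidthPx);
  return requested === undefined ? cap : Math.min(requested, cap);
}
```

For example, a 400 px viewport yields 800 points, a 100 px viewport is raised to the 300-point floor, and anything 600 px or wider is clamped to 1200.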

Operational expectation:

- long near-flat drift intervals are compressed to boundary points (typically 2-4 points per interval)

- sharp altitude events and state transitions remain visible due to anchor pinning


2) Write Path Remains Strict SI

All writes/commands remain canonical SI units only.

No unit interpretation based on UI preference is allowed for mutation endpoints.

3) Response Unit Selection Is Presentation-Only

response_unit_system may be provided only for presentation endpoints.

Rules:

- Core CRUD/entity APIs always return SI.

- Presentation endpoints may return converted values.

- If unspecified, the default is metric.

- Response must echo the effective unit system.
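The defaulting and echo rules could look like the sketch below. The enum strings come from the contract later in this document; the function name and the shape of the returned meta object are assumptions for illustration.

```typescript
type UnitSystem =
  | "UNIT_SYSTEM_UNSPECIFIED"
  | "UNIT_SYSTEM_METRIC"
  | "UNIT_SYSTEM_US_CUSTOMARY";

type EffectiveUnitSystem = "UNIT_SYSTEM_METRIC" | "UNIT_SYSTEM_US_CUSTOMARY";

// Unspecified or missing falls back to metric; the result is what the response echoes.
function resolveEffectiveUnitSystem(requested?: UnitSystem): EffectiveUnitSystem {
  return requested === "UNIT_SYSTEM_US_CUSTOMARY"
    ? "UNIT_SYSTEM_US_CUSTOMARY"
    : "UNIT_SYSTEM_METRIC";
}

// Hypothetical helper: builds the part of the response meta that echoes the unit system.
function buildUnitMeta(requested?: UnitSystem): { effective_unit_system: EffectiveUnitSystem } {
  return { effective_unit_system: resolveEffectiveUnitSystem(requested) };
}
```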

4) Plan Revisions Are First-Class

Presentation response must contain ordered segments, each bound to a stable plan_version_id.

Rules:

- No cross-segment waypoint-index matching.

- Segment boundaries are explicit by time and distance.

- Actual telemetry points belong to the active plan version at sample time.

- Replan transition markers are part of the payload.
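To illustrate the rule that a telemetry point belongs to the plan version active at sample time, here is a small sketch. The `SegmentWindow` type and the half-open interval convention (start inclusive, end exclusive) are assumptions; the ADR only requires that boundaries be explicit.

```typescript
// Hypothetical time window for one plan version; endMs is null while the segment is open.
interface SegmentWindow {
  planVersionId: string;
  startMs: number;
  endMs: number | null;
}

// Returns the segment whose window contains the sample time, or null if none does.
// No waypoint-index matching is involved: membership is decided by time alone.
function activeSegmentAt(segments: SegmentWindow[], sampleMs: number): SegmentWindow | null {
  for (const s of segments) {
    const afterStart = sampleMs >= s.startMs;
    const beforeEnd = s.endMs === null || sampleMs < s.endMs;
    if (afterStart && beforeEnd) return s;
  }
  return null;
}
```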

5) Every Unit-Bearing Series Is Self-Describing

Each returned series must include:

- unit_system

- unit_label

- precision_hint

Frontend must render labels and formatting guidance from payload metadata, not local assumptions.
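A frontend formatter driven purely by payload metadata might look like this sketch. The `SeriesMeta` field names follow the list above; the function name and the "value, space, label" output format are assumptions.

```typescript
interface SeriesMeta {
  unit_system: string;
  unit_label: string;
  precision_hint: number; // number of decimal places to display
}

// Formats a value using only what the server sent, with no local unit preference.
function formatSeriesValue(value: number, meta: SeriesMeta): string {
  return value.toFixed(meta.precision_hint) + " " + meta.unit_label;
}
```

With the metadata from the response example in this document, an altitude of 132.4 with `precision_hint: 0` and `unit_label: "ft"` renders as "132 ft".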

6) Snapshot Freshness Is Mandatory

To prevent stale updates overwriting fresh data, response must include monotonic freshness keys.

Required:

- snapshot_id

- generated_at

Frontend applies only newer snapshots.
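A store-side freshness guard could be sketched as below. The ADR does not define how snapshot_id values are ordered, so this sketch assumes ordering by generated_at and treats a repeated snapshot_id as "nothing to apply"; both choices and the function name are assumptions.

```typescript
interface SnapshotMeta {
  snapshot_id: string;
  generated_at: string; // RFC3339 timestamp
}

// Returns true only when the incoming snapshot is strictly newer than the one in state.
function shouldApplySnapshot(incoming: SnapshotMeta, current: SnapshotMeta | null): boolean {
  if (current === null) return true;
  if (incoming.snapshot_id === current.snapshot_id) return false;
  // Assumption: generated_at is the monotonic ordering key.
  return Date.parse(incoming.generated_at) > Date.parse(current.generated_at);
}
```

A late response from an earlier poll is then dropped instead of overwriting fresher state.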

7) Transport Decision: gRPC (Connect) Primary

For this product stack, the telemetry presentation endpoint will use gRPC over Connect as the primary transport.

Rationale:

- Current frontend already uses Connect/gRPC clients broadly for mission and telemetry domains.

- Strong schema and enum contracts fit unit-system metadata and segment semantics.

- Frequent update patterns and future push streaming are better aligned with gRPC than ad-hoc REST polling.

- Shared protobuf contracts improve multi-client parity (web/iOS/Android).

REST position:

- Existing REST endpoints remain supported where they already exist.

- No new REST telemetry presentation endpoint is required for v1.

- If an external non-gRPC consumer appears, a REST facade can be added later without changing canonical backend processing.

Canonical Unit Policy

Canonical backend write units remain:

- Distance short/local: meters

- Distance long/route: kilometers

- Altitude: meters

- Speed: meters/second

- Acceleration: meters/second^2

- Angle/heading/orientation: degrees

- Temperature: Celsius

- Mass/payload: kilograms

- Battery percent: percent

- Battery voltage/current/capacity: V/A/Ah

- Duration: seconds

Presentation conversion target may be U.S. Customary where requested.
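For the two quantities that appear in the response example (altitude in ft, distance in mi), a presentation-side conversion could be sketched as follows. The function names are hypothetical, and the choice of feet and statute miles as the U.S. Customary targets is taken from that example rather than from a stated policy. The factors are the exact definitions 1 ft = 0.3048 m and 1 mi = 1.609344 km.

```typescript
const METERS_PER_FOOT = 0.3048;        // exact by definition
const KILOMETERS_PER_MILE = 1.609344;  // exact by definition

// Canonical altitude (meters) to presentation feet.
function metersToFeet(meters: number): number {
  return meters / METERS_PER_FOOT;
}

// Canonical route distance (kilometers) to presentation statute miles.
function kilometersToMiles(kilometers: number): number {
  return kilometers / KILOMETERS_PER_MILE;
}
```

These helpers belong on the read path only; nothing in this sketch is meant to be applied to values sent to mutation APIs.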

Contract (Normative Semantics)

Field names may change at the protobuf/OpenAPI layer; the semantics below are mandatory.

Request

{

"mission_id": "string",

"from_time": "RFC3339 | optional",

"to_time": "RFC3339 | optional",

"max_points": 600,

"client_profile": "CLIENT_PROFILE_WEB | CLIENT_PROFILE_MOBILE | optional",

"viewport_width_px": 900,

"device_pixel_ratio": 2,

"series_mask": ["terrain", "planned", "actual"],

"response_unit_system": "UNIT_SYSTEM_METRIC | UNIT_SYSTEM_US_CUSTOMARY | UNIT_SYSTEM_UNSPECIFIED",

"include_segments": true,

"since_snapshot_id": "string | optional"

}

Response

{

"meta": {

"snapshot_id": "string",

"generated_at": "RFC3339",

"effective_unit_system": "UNIT_SYSTEM_US_CUSTOMARY",

"default_precision_hint": 2

},

"segments": [

{

"segment_id": "string",

"plan_version_id": "string",

"start_time": "RFC3339",

"end_time": "RFC3339 | null",

"plan_change_at": {

"time": "RFC3339 | null",

"distance": 1.82

},

"series_meta": {

"distance": { "unit_system": "UNIT_SYSTEM_US_CUSTOMARY", "unit_label": "mi", "precision_hint": 2 },

"altitude": { "unit_system": "UNIT_SYSTEM_US_CUSTOMARY", "unit_label": "ft", "precision_hint": 0 }

},

"points": [

{

"time": "RFC3339",

"distance": 0.15,

"terrain": 45.2,

"planned": 140.0,

"actual": 132.4,

"contingency": null,

"warning": null,

"agl": 87.2,

"msl": 177.6

}

]

}

]

}

Render-Only Delivery Model (Normative)

Goal: the client (web/mobile) receives only the data actually needed to render the current graph state.

“No Over-Delivery” Principle

The server must not send a raw telemetry stream when the client has requested a render-ready representation.

Mandatory rules:

- Points in the response are already downsampled for the current viewport.

- Points are bounded by max_points (or the server-side cap if max_points exceeds the allowed maximum).

- Only the requested series (series_mask) are included in the response.

- If no new snapshot has appeared, the server returns an empty delta with updated meta status rather than repeating the full array.

Parameters Affecting Response Volume

To adapt for web/mobile, the request must convey render context:

- client_profile:

  - CLIENT_PROFILE_WEB

  - CLIENT_PROFILE_MOBILE

- viewport_width_px

- device_pixel_ratio

- max_points

- series_mask:

  - terrain

  - planned

  - actual

  - contingency

  - warning

Server Behavior by Client Profile (v1 defaults)

- WEB profile:

  - target max_points: 600-1200 (depending on viewport_width_px)

  - default cadence: 1s (or 500ms during warning/contingency)

- MOBILE profile:

  - target max_points: 250-500

  - default cadence: 1s (or 500ms during warning/contingency), with a stricter payload budget

Payload Budget Targets (SLO)

- WEB:

  - p95 response size <= 180KB for full refresh

  - p95 delta size <= 40KB

- MOBILE:

  - p95 response size <= 80KB for full refresh

  - p95 delta size <= 20KB

Incremental Update Semantics

since_snapshot_id must return only changes relative to the specified snapshot:

- New points in active segments.

- New or updated segment markers (e.g., replan boundary).

- Updated meta (snapshot_id, generated_at).

If no changes have occurred:

- Response contains meta confirming current freshness.

- segments[].points may be empty.

Segment Delivery Model: Full Refresh vs. Delta

The graph must always display the complete mission — all plan versions from takeoff to now. The delivery model has two modes:

Full refresh (first call, or since_snapshot_id omitted):

- Server returns ALL segments with ALL their (downsampled) points.

- FE builds the complete graph state from scratch.

- Payload budget: ≤ 180 KB web / ≤ 80 KB mobile (p95).

- Used on: graph open, page reload, error recovery, fallback.

Delta update (subsequent polls with since_snapshot_id):

- Server returns only segments that changed since the referenced snapshot.

- Unchanged segments are not re-sent — the FE keeps them in local state.

- Payload budget: ≤ 40 KB web / ≤ 20 KB mobile (p95).

- Used on: every 1s poll during flight.

What a delta may contain:

- New points appended to the currently active segment.

- A closed segment (its end_time set) if a replan occurred.

- A brand-new segment (new plan_version_id) after a replan.

- Updated meta with a new snapshot_id.

FE merge rule: the frontend always holds ALL segments in memory (the full graph). Deltas are merged into this state — new points are appended, new segments are added, closed segments are updated. The result is that the FE always has the complete picture; only the wire payload is minimized.
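The merge rule can be sketched as a pure function over the in-memory graph state. The types below are trimmed to the fields the merge needs, and the assumption that a delta carries only new points for an existing segment (so they can simply be appended) is mine; names such as `mergeDelta` are hypothetical.

```typescript
interface GraphPoint { time: string; distance: number; }

interface GraphSegment {
  segment_id: string;
  plan_version_id: string;
  end_time: string | null;
  points: GraphPoint[];
}

interface GraphSnapshot { snapshot_id: string; segments: GraphSegment[]; }

// Finds a segment by id; returns -1 when it is not in the list.
function indexOfSegment(segments: GraphSegment[], segmentId: string): number {
  for (let i = 0; i < segments.length; i++) {
    if (segments[i].segment_id === segmentId) return i;
  }
  return -1;
}

// Merges a delta into the full graph state without mutating the previous state.
function mergeDelta(state: GraphSnapshot, delta: GraphSnapshot): GraphSnapshot {
  const merged: GraphSegment[] = state.segments.map((s) => ({
    segment_id: s.segment_id,
    plan_version_id: s.plan_version_id,
    end_time: s.end_time,
    points: s.points.slice(),
  }));
  for (const d of delta.segments) {
    const idx = indexOfSegment(merged, d.segment_id);
    if (idx === -1) {
      // Brand-new segment after a replan.
      merged.push({
        segment_id: d.segment_id,
        plan_version_id: d.plan_version_id,
        end_time: d.end_time,
        points: d.points.slice(),
      });
    } else {
      // Known segment: append new points and pick up a closing end_time.
      merged[idx].end_time = d.end_time;
      merged[idx].points = merged[idx].points.concat(d.points);
    }
  }
  return { snapshot_id: delta.snapshot_id, segments: merged };
}
```

An empty delta leaves every segment untouched and only refreshes the snapshot id held in state.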

Example polling flow:

1. FE opens graph → full refresh (no since_snapshot_id)

Server → FE: { segments: [seg1(280pts), seg2(150pts)], snapshot: "snap-047" }

FE state: [seg1 ■■■■■■■■ | seg2 ■■■■■] ← full graph

2. 1s later → delta poll

Server → FE: { segments: [seg2(3 new pts)], snapshot: "snap-048" }

FE state: [seg1 ■■■■■■■■ | seg2 ■■■■■■] ← 3 pts appended to seg2

3. 1s later → delta poll, nothing changed

Server → FE: { segments: [], snapshot: "snap-048" }

FE state: [seg1 ■■■■■■■■ | seg2 ■■■■■■] ← unchanged

4. Replan happens → next delta poll

Server → FE: { segments: [seg2(end_time set), seg3(new, 2pts)], snapshot: "snap-049" }

FE state: [seg1 ■■■■■■■■ | seg2 ■■■■■■ | seg3 ■] ← seg2 closed, seg3 started

Why This Matters

- A single backend contract serves web and mobile without duplicating logic.

- Network and client CPU scale with the actual screen size and UX scenario.

- Eliminates transmission of thousands of surplus points that have no impact on the rendered result.

Graph Update Cadence (Normative)

The frontend must not call the presentation endpoint at an unconstrained rate.

Default Polling Cadence (v1)

- Mission IN_PROGRESS or HOLD: call every 1s with since_snapshot_id.

- Mission CONTINGENCY or WARNING active: call every 500ms with since_snapshot_id.

- Mission ASSIGNED/READY (not flying): call every 10s.

- Mission COMPLETED/TERMINATED: no periodic polling; fetch once on open and on manual refresh.

Rationale

- 1s during standard flight keeps graph responsive while controlling backend QPS.

- 500ms during warning/contingency preserves operator visibility in critical transitions.

- Non-flying states do not require high-frequency refresh.
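The cadence table above maps naturally onto a small lookup. In this sketch, modelling CONTINGENCY and WARNING as values of the same state union is an assumption (they may be separate flags in the real mission model), as are the type and function names.

```typescript
type MissionState =
  | "ASSIGNED"
  | "READY"
  | "IN_PROGRESS"
  | "HOLD"
  | "CONTINGENCY"
  | "WARNING"
  | "COMPLETED"
  | "TERMINATED";

// Returns the polling interval in milliseconds, or null when no periodic polling applies.
function pollingIntervalMs(state: MissionState): number | null {
  switch (state) {
    case "CONTINGENCY":
    case "WARNING":
      return 500;
    case "IN_PROGRESS":
    case "HOLD":
      return 1000;
    case "ASSIGNED":
    case "READY":
      return 10000;
    default:
      // COMPLETED / TERMINATED: fetch once on open and on manual refresh only.
      return null;
  }
}
```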

Frontend Scope Changes

Replace

- FE graph merge logic in useFlyGraphData for altitude route assembly.

- Worker merge dependency (useFullGraphData and getFullRouteData) for this endpoint.

- Graph unit-label dependence on global local-storage preference for server-series payload.

Keep

- Rendering components (Graph, tooltip, markers).

- Mission-state UI behavior (flying/contingency/warning visuals).

- SI write-path conversion helpers until mutation APIs are fully typed SI at form boundaries.

Add

- Telemetry presentation service client (services/telemetry/...).

- Adapter mapping server payload to existing graph prop model.

- Snapshot freshness guard in store update path.

- Feature-flag switch and rollback fallback.

Acceptance Criteria

Functional Correctness

- No UI regressions on high-density telemetry missions.

- Replan missions show correct segmented planned/actual traces.

- Cross-client numerical parity holds within agreed tolerances.

- Write APIs remain strict SI and pass existing mutation tests.

- Every unit-bearing series includes effective unit metadata.

- Stale snapshot responses never override fresher data in FE state.

Downsampling Fidelity (Gate Criteria)

- Point count is reduced by at least 70% on representative missions (baseline: ~3,200 → target: <960) without visually significant shape loss. This is the primary gate criterion.

- As a secondary validation: a 20-minute near-flat drift interval produces ≤4 points while preserving segment boundary correctness.
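The primary gate is simple arithmetic: 3,200 raw points reduced by 70% leaves 960. A parity test could check it with a helper like the one below; the function name is hypothetical, and integer percentages are used so the boundary case is not affected by floating-point rounding.

```typescript
// True when the downsampled count meets the required reduction versus the raw count.
function meetsReductionGate(rawPoints: number, downsampledPoints: number, minReductionPercent: number): boolean {
  if (rawPoints <= 0) return false;
  // (raw - down) / raw >= percent / 100, rearranged to avoid division.
  return (rawPoints - downsampledPoints) * 100 >= minReductionPercent * rawPoints;
}
```

Note that this checks point count only; the "no visually significant shape loss" half of the criterion still needs visual regression testing.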

Performance Budgets

- Response payload stays within p95 budget targets: 180 KB web full / 40 KB web delta / 80 KB mobile full / 20 KB mobile delta.

Risk Register

- Backend CPU increase. Mitigation: bounded max_points, caching, pre-aggregation.

- Schema complexity. Mitigation: versioned contract + consumer-driven tests.

- Rollout regressions. Mitigation: feature flag + dual-path parity + replay.

- Partial replan semantics. Mitigation: block rollout until plan_version_id segmentation is fully implemented.

Consequences

Positive

- Single source of truth for graph data: Conversion formulas, downsampling, and plan segmentation live in one place (server). Bug fixes propagate to all clients immediately.

- Reduced FE complexity: The altitude graph frontend becomes a thin rendering client. The useFlyGraphData pipeline (~800 LOC including worker code) can be retired.

- Better operator safety during replans: Plan-version segmentation eliminates a class of index-remap bugs that could show incorrect altitude traces during live flight.

- Smaller payloads: 70%+ reduction in points per response directly reduces bandwidth and parse time, especially on web.

Negative

- Backend team now owns graph-shape correctness — Bugs in downsampling or segment boundary logic require backend deployment to fix. FE cannot patch around them locally.

- New endpoint coupling — The FE altitude graph is now hard-dependent on the presentation endpoint. If the endpoint is down or slow, the graph is entirely unavailable (mitigated by dual-path fallback in Phase 1–2).

- Increased backend compute — Server-side RDP and unit conversion add CPU per request. Must be monitored and bounded (see Risk Register).

- Schema evolution overhead — Any change to graph series or segment semantics requires coordinated protobuf schema updates across FE and BE.

Alternatives Considered

- Patch existing FE pipeline only. Rejected: addresses performance partially but does not solve cross-platform formula duplication or replan segmentation. Was implemented as a stopgap (memoization and throttling in useFlyGraphData); this ADR replaces that approach. Estimated effort: ~2 weeks FE-only, but yields single-platform benefit and leaves consistency gap open.

- FE WASM math acceleration. Rejected: reduces compute time on the client but does not reduce payload volume (~450 KB still transferred). Does not address multi-client contract drift (iOS/Android would still need their own implementations). Estimated effort: ~6 weeks for WASM module + integration, with ongoing maintenance burden across platforms.

- Unit-aware writes (accept user-preferred units in mutation APIs). Rejected: introduces ambiguity in write paths, since a mutation with altitude: 400 could mean meters or feet depending on a header/parameter. This is a flight-safety risk. All mutation APIs must remain unambiguous canonical SI.

Rollback Plan

- Disable server-series feature flag.

- Revert to legacy FE graph pipeline.

- Capture and replay failing snapshot payloads for root-cause.

Open Questions

Blocking for v1 (must resolve before Phase 1 implementation)

- Segment point model: pre-aligned terrain per point vs. separate terrain track with index mapping. Affects protobuf schema and downsampling pipeline design.

- Contingency/warning representation: separate series vs. state-tagged actual series. Affects series_mask semantics and FE adapter shape.

Deferred (can resolve during Phase 2 or later)

- Whether true push transport (SSE/WebSocket) is required beyond v1, or polling + since_snapshot_id is sufficient long-term.

- Final parity tolerances by metric (distance, AGL, MSL, boundaries), to be derived from Phase 1 parity comparison data.

- Production tuning for epsilon_x_px/epsilon_y_px by viewport and device pixel ratio, to be refined during Phase 2 visual regression testing.

Links

[Uncrew Apollo Altitude Graph: Architecture and Dynamic Plan Update Strategy Overview](confluence-title://UE/Uncrew Apollo Altitude Graph: Architecture and Dynamic Plan Update Strategy Overview)